1. GitLab needs an ingress-controller service once it is installed, so deploy the ingress controller first.

Create the YAML file aliyun-ingress-nginx.yaml

```

apiVersion: v1
kind: Namespace
metadata:
  name: ingress-nginx
  labels:
    app: ingress-nginx

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nginx-ingress-controller
  namespace: ingress-nginx
  labels:
    app: ingress-nginx

---

# Note: rbac.authorization.k8s.io/v1beta1 was removed in Kubernetes 1.22.
# On newer clusters use rbac.authorization.k8s.io/v1 here and in the
# ClusterRoleBinding below.
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: nginx-ingress-controller
  labels:
    app: ingress-nginx
rules:
  - apiGroups:
      - ""
    resources:
      - configmaps
      - endpoints
      - nodes
      - pods
      - secrets
      - namespaces
      - services
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - "extensions"
      - "networking.k8s.io"
    resources:
      - ingresses
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - events
    verbs:
      - create
      - patch
  - apiGroups:
      - "extensions"
      - "networking.k8s.io"
    resources:
      - ingresses/status
    verbs:
      - update
  - apiGroups:
      - ""
    resources:
      - configmaps
    verbs:
      - create
  - apiGroups:
      - ""
    resources:
      - configmaps
    resourceNames:
      - "ingress-controller-leader-nginx"
    verbs:
      - get
      - update

---

apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: nginx-ingress-controller
  labels:
    app: ingress-nginx
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: nginx-ingress-controller
subjects:
  - kind: ServiceAccount
    name: nginx-ingress-controller
    namespace: ingress-nginx

---

apiVersion: v1
kind: Service
metadata:
  labels:
    app: ingress-nginx
  name: nginx-ingress-lb
  namespace: ingress-nginx
spec:
  # DaemonSet need:
  # ----------------
  type: ClusterIP
  # ----------------
  # Deployment need:
  # ----------------
  # type: NodePort
  # ----------------
  ports:
  - name: http
    port: 80
    targetPort: 80
    protocol: TCP
  - name: https
    port: 443
    targetPort: 443
    protocol: TCP
  - name: metrics
    port: 10254
    protocol: TCP
    targetPort: 10254
  selector:
    app: ingress-nginx

---

kind: ConfigMap
apiVersion: v1
metadata:
  name: nginx-configuration
  namespace: ingress-nginx
  labels:
    app: ingress-nginx
data:
  keep-alive: "75"
  keep-alive-requests: "100"
  upstream-keepalive-connections: "10000"
  upstream-keepalive-requests: "100"
  upstream-keepalive-timeout: "60"
  allow-backend-server-header: "true"
  enable-underscores-in-headers: "true"
  generate-request-id: "true"
  http-redirect-code: "301"
  ignore-invalid-headers: "true"
  log-format-upstream: '{"@timestamp": "$time_iso8601","remote_addr": "$remote_addr","x-forward-for": "$proxy_add_x_forwarded_for","request_id": "$req_id","remote_user": "$remote_user","bytes_sent": $bytes_sent,"request_time": $request_time,"status": $status,"vhost": "$host","request_proto": "$server_protocol","path": "$uri","request_query": "$args","request_length": $request_length,"duration": $request_time,"method": "$request_method","http_referrer": "$http_referer","http_user_agent": "$http_user_agent","upstream-sever":"$proxy_upstream_name","proxy_alternative_upstream_name":"$proxy_alternative_upstream_name","upstream_addr":"$upstream_addr","upstream_response_length":$upstream_response_length,"upstream_response_time":$upstream_response_time,"upstream_status":$upstream_status}'
  max-worker-connections: "65536"
  worker-processes: "2"
  proxy-body-size: 20m
  proxy-connect-timeout: "10"
  proxy_next_upstream: error timeout http_502
  reuse-port: "true"
  server-tokens: "false"
  ssl-ciphers: ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-AES256-GCM-SHA384:DHE-RSA-AES128-GCM-SHA256:DHE-DSS-AES128-GCM-SHA256:kEDH+AESGCM:ECDHE-RSA-AES128-SHA256:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA:ECDHE-ECDSA-AES128-SHA:ECDHE-RSA-AES256-SHA384:ECDHE-ECDSA-AES256-SHA384:ECDHE-RSA-AES256-SHA:ECDHE-ECDSA-AES256-SHA:DHE-RSA-AES128-SHA256:DHE-RSA-AES128-SHA:DHE-DSS-AES128-SHA256:DHE-RSA-AES256-SHA256:DHE-DSS-AES256-SHA:DHE-RSA-AES256-SHA:AES128-GCM-SHA256:AES256-GCM-SHA384:AES128-SHA256:AES256-SHA256:AES128-SHA:AES256-SHA:AES:CAMELLIA:DES-CBC3-SHA:!aNULL:!eNULL:!EXPORT:!DES:!RC4:!MD5:!PSK:!aECDH:!EDH-DSS-DES-CBC3-SHA:!EDH-RSA-DES-CBC3-SHA:!KRB5-DES-CBC3-SHA
  ssl-protocols: TLSv1 TLSv1.1 TLSv1.2
  ssl-redirect: "false"
  worker-cpu-affinity: auto

---

kind: ConfigMap
apiVersion: v1
metadata:
  name: tcp-services
  namespace: ingress-nginx
  labels:
    app: ingress-nginx

---
kind: ConfigMap
apiVersion: v1
metadata:
  name: udp-services
  namespace: ingress-nginx
  labels:
    app: ingress-nginx

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nginx-ingress-controller
  namespace: ingress-nginx
  labels:
    app: ingress-nginx
  annotations:
    component.version: "v0.30.0"
    component.revision: "v1"
spec:
  # Deployment need:
  # ----------------
  # replicas: 1
  # ----------------
  selector:
    matchLabels:
      app: ingress-nginx
  template:
    metadata:
      labels:
        app: ingress-nginx
      annotations:
        prometheus.io/port: "10254"
        prometheus.io/scrape: "true"
        scheduler.alpha.kubernetes.io/critical-pod: ""
    spec:
      # DaemonSet need:
      # ----------------
      hostNetwork: true
      # ----------------
      serviceAccountName: nginx-ingress-controller
      priorityClassName: system-node-critical
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: app
                  operator: In
                  values:
                  - ingress-nginx
              topologyKey: kubernetes.io/hostname
            weight: 100
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: type
                operator: NotIn
                values:
                - virtual-kubelet
      containers:
        - name: nginx-ingress-controller
          image: registry.cn-beijing.aliyuncs.com/acs/aliyun-ingress-controller:v0.30.0.2-9597b3685-aliyun
          args:
            - /nginx-ingress-controller
            - --configmap=$(POD_NAMESPACE)/nginx-configuration
            - --tcp-services-configmap=$(POD_NAMESPACE)/tcp-services
            - --udp-services-configmap=$(POD_NAMESPACE)/udp-services
            - --publish-service=$(POD_NAMESPACE)/nginx-ingress-lb
            - --annotations-prefix=nginx.ingress.kubernetes.io
            - --enable-dynamic-certificates=true
            - --v=2
          securityContext:
            allowPrivilegeEscalation: true
            capabilities:
              drop:
                - ALL
              add:
                - NET_BIND_SERVICE
            runAsUser: 101
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          ports:
            - name: http
              containerPort: 80
            - name: https
              containerPort: 443
          livenessProbe:
            failureThreshold: 3
            httpGet:
              path: /healthz
              port: 10254
              scheme: HTTP
            initialDelaySeconds: 10
            periodSeconds: 10
            successThreshold: 1
            timeoutSeconds: 10
          readinessProbe:
            failureThreshold: 3
            httpGet:
              path: /healthz
              port: 10254
              scheme: HTTP
            periodSeconds: 10
            successThreshold: 1
            timeoutSeconds: 10
          # resources:
          #   limits:
          #     cpu: "1"
          #     memory: 2Gi
          #   requests:
          #     cpu: "1"
          #     memory: 2Gi
          volumeMounts:
            - mountPath: /etc/localtime
              name: localtime
              readOnly: true
      volumes:
      - name: localtime
        hostPath:
          path: /etc/localtime
          type: File
      # Pods are scheduled only onto nodes that carry this label
      nodeSelector:
        boge/ingress-controller-ready: "true"
      tolerations:
      - operator: Exists
      initContainers:
      - command:
        - /bin/sh
        - -c
        - |
          mount -o remount rw /proc/sys
          sysctl -w net.core.somaxconn=65535
          sysctl -w net.ipv4.ip_local_port_range="1024 65535"
          sysctl -w fs.file-max=1048576
          sysctl -w fs.inotify.max_user_instances=16384
          sysctl -w fs.inotify.max_user_watches=524288
          sysctl -w fs.inotify.max_queued_events=16384
        image: registry.cn-beijing.aliyuncs.com/acs/busybox:v1.29.2
        imagePullPolicy: Always
        name: init-sysctl
        securityContext:
          privileged: true
          procMount: Default

---

## Deployment need for aliyun'k8s:
#apiVersion: v1
#kind: Service
#metadata:
#  annotations:
#    service.beta.kubernetes.io/alibaba-cloud-loadbalancer-id: "lb-xxxxxxxxxxxxxxxxxxx"
#    service.beta.kubernetes.io/alibaba-cloud-loadbalancer-force-override-listeners: "true"
#  labels:
#    app: nginx-ingress-lb
#  name: nginx-ingress-lb-local
#  namespace: ingress-nginx
#spec:
#  externalTrafficPolicy: Local
#  ports:
#  - name: http
#    port: 80
#    protocol: TCP
#    targetPort: 80
#  - name: https
#    port: 443
#    protocol: TCP
#    targetPort: 443
#  selector:
#    app: ingress-nginx
#  type: LoadBalancer

```

Deploy the service

```

# kubectl apply -f aliyun-ingress-nginx.yaml
namespace/ingress-nginx created
serviceaccount/nginx-ingress-controller created
clusterrole.rbac.authorization.k8s.io/nginx-ingress-controller created
clusterrolebinding.rbac.authorization.k8s.io/nginx-ingress-controller created
service/nginx-ingress-lb created
configmap/nginx-configuration created
configmap/tcp-services created
configmap/udp-services created
daemonset.apps/nginx-ingress-controller created

# Listing the pods shows nothing at all. Why?
# kubectl -n ingress-nginx get pod

Note that the YAML above sets a node selector, so pods are scheduled only onto nodes that carry the label I specified:
      nodeSelector:
        boge/ingress-controller-ready: "true"

# Now label the nodes
# kubectl label node 10.4.7.111 boge/ingress-controller-ready=true
node/10.4.7.111 labeled
# kubectl label node 10.4.7.112 boge/ingress-controller-ready=true
node/10.4.7.112 labeled

```

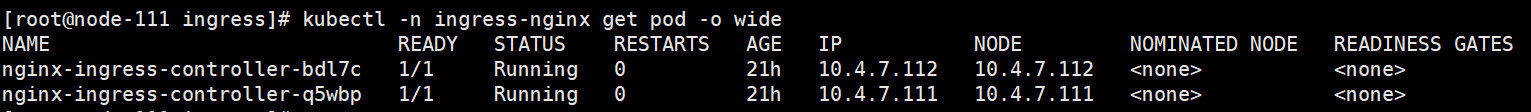

List the pods again and they now show up as expected.
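With the controller pods running, a workload such as GitLab can be exposed through the controller with an Ingress resource. The manifest below is only a minimal sketch under stated assumptions: the host `git.example.com`, the namespace `gitlab`, and the Service name `gitlab` on port 80 are hypothetical placeholders, not values from this guide. It uses `networking.k8s.io/v1beta1`, which fits the cluster generation that the v0.30.0 controller and the `v1beta1` RBAC objects above target. That API version was removed in Kubernetes 1.22, where `networking.k8s.io/v1` with its different backend syntax is needed instead.

```yaml
# Hypothetical Ingress routed by the nginx ingress controller deployed above.
# Host, namespace, and Service name/port are placeholders for illustration.
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: gitlab
  namespace: gitlab
  annotations:
    # Select the nginx ingress class (the controller's default class name)
    kubernetes.io/ingress.class: nginx
spec:
  rules:
  - host: git.example.com
    http:
      paths:
      - path: /
        backend:
          serviceName: gitlab
          servicePort: 80
```

Because the DaemonSet runs with `hostNetwork: true`, the controller listens on ports 80 and 443 of each labeled node, so pointing the host name at one of those node IPs should be enough to reach the backend in a setup like this one.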
