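# How to Develop Baseline Forecasts for Multi-Site Multivariate Air Pollution Time Series Forecasting

> Original: [https://machinelearningmastery.com/how-to-develop-baseline-forecasts-for-multi-site-multivariate-air-pollution-time-series-forecasting/](https://machinelearningmastery.com/how-to-develop-baseline-forecasts-for-multi-site-multivariate-air-pollution-time-series-forecasting/)

Real-world time series forecasting is challenging for many reasons, including problem features such as multiple input variables, the requirement to predict multiple time steps, and the need to perform the same type of prediction for multiple physical sites.

The EMC Data Science Global Hackathon dataset, or the "_Air Quality Prediction_" dataset for short, describes weather conditions at multiple sites and requires a prediction of air quality measurements over the subsequent three days.

When working with a new time series forecasting dataset, an important first step is to develop a baseline in model performance against which the skill of all other, more sophisticated strategies can be compared. Baseline forecasting strategies are simple and fast. They are referred to as "naive" strategies because they assume very little or nothing about the specific forecasting problem.

In this tutorial, you will discover how to develop naive forecasting methods for the multistep multivariate air pollution time series forecasting problem.

After completing this tutorial, you will know:

* How to develop a test harness for evaluating forecasting strategies on the air pollution dataset.

* How to develop global naive forecast strategies that use data from the entire training dataset.

* How to develop local naive forecast strategies that use data from the specific interval being forecast.

Let's get started.

How to Develop Baseline Forecasts for Multi-Site Multivariate Air Pollution Time Series Forecasting

Photo by [DAVID HOLT](https://www.flickr.com/photos/zongo/38524476520/), some rights reserved.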

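## Tutorial Overview

This tutorial is divided into six parts; they are:

* Problem Description

* Naive Methods

* Model Evaluation

* Global Naive Methods

* Chunk Naive Methods

* Summary of Results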

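## Problem Description

The Air Quality Prediction dataset describes weather conditions at multiple sites and requires a prediction of air quality measurements over the subsequent three days.

Specifically, weather observations such as temperature, pressure, wind speed, and wind direction are provided hourly for eight days for multiple sites. The objective is to predict air quality measurements for the next three days at multiple sites. The forecast lead times are not contiguous; instead, specific lead times must be forecast over the 72-hour forecast period. They are: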

```py
+1, +2, +3, +4, +5, +10, +17, +24, +48, +72
```

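Further, the dataset is divided into disjoint but contiguous chunks of data, with eight days of data followed by three days that require a forecast.

Not all observations are available at all sites or chunks, and not all output variables are available at all sites and chunks. There are large portions of missing data that must be addressed.

The dataset was used as the basis for a [short duration machine learning competition](https://www.kaggle.com/c/dsg-hackathon) (or hackathon) on the Kaggle website in 2012.

Submissions for the competition were evaluated against the true observations that were withheld from participants and scored using Mean Absolute Error (MAE). Submissions required the value -1,000,000 to be specified wherever a forecast was not possible due to missing data. In fact, a template of where to insert missing values was provided and had to be adopted for all submissions.

A winner achieved an MAE of 0.21058 on the withheld test set ([private leaderboard](https://www.kaggle.com/c/dsg-hackathon/leaderboard)) using a random forest on lagged observations. A writeup of this solution is available in the post:

* [Chucking everything into a Random Forest: Ben Hamner on Winning The Air Quality Prediction Hackathon](http://blog.kaggle.com/2012/05/01/chucking-everything-into-a-random-forest-ben-hamner-on-winning-the-air-quality-prediction-hackathon/), 2012.

In this tutorial, we will explore how to develop naive forecasts for the problem that can be used as a baseline to determine whether a model has skill on the problem or not.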

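## Naive Forecast Methods

A baseline in forecast performance provides a point of comparison.

It is a point of reference for all other modeling techniques on your problem. If a model achieves performance at or below the baseline, the technique should be fixed or abandoned.

The technique used to generate a forecast to calculate the baseline performance must be easy to implement and naive of problem-specific details. The principle is that if a sophisticated forecast method cannot outperform a model that uses little or no problem-specific information, then it does not have skill.

Problem-agnostic forecast methods can and should be used first, followed by naive methods that use a modicum of problem-specific information.

Two examples of problem-agnostic naive forecast methods that could be used include:

* Persist the last observed value for each series.

* Forecast the average of the observed values for each series.

The data is divided into chunks, or intervals, of time. Each chunk of time has multiple variables at multiple sites to forecast. The persistence forecast method makes sense at this chunk level of organization of the data.

Other persistence methods could be explored; for example:

* Forecast the observations from the previous day for each of the next three days, for each series.

* Forecast the observations from the previous three days for the next three days, for each series.

These are desirable baseline methods to explore, but the large amount of missing data and the discontiguous structure of most of the data chunks make them challenging to implement without non-trivial data preparation.

Forecasting the average observation for each series can be elaborated further; for example:

* Forecast the global (across-chunk) average value for each series.

* Forecast the local (within-chunk) average value for each series.

A three-day forecast is required for each series, and each chunk starts at a different time, e.g. a different time of day. As such, the forecast lead times for each chunk will fall on different hours of the day.

A further elaboration of forecasting the average value is to incorporate the hour of day that is being forecast; for example:

* Forecast the global (across-chunk) average value for the hour of day of each forecast lead time.

* Forecast the local (within-chunk) average value for the hour of day of each forecast lead time.

Many variables are measured at multiple sites; as such, it may be possible to use information across series, such as in the calculation of per-hour averages or averages for forecast lead times. These are interesting, but may exceed the mandate of naive.

This is a good starting point, although there may be further elaborations of the naive methods that you may want to consider and explore. Remember, the goal is to use very little problem-specific information in order to develop a forecast baseline.

In summary, we will investigate five different naive forecasting methods for this problem, the best of which will provide a lower bound on performance against which other models can be compared. They are:

1. Global average value per series

2. Global average value for the forecast lead time per series

3. Local persisted value per series

4. Local average value per series

5. Local average value for the forecast lead time per series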
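The difference between a global (across-chunk) and a local (within-chunk) average can be sketched on a toy series. The values in `chunk_a` and `chunk_b` below are hypothetical and are not taken from the dataset; the sketch only assumes that missing observations are stored as NaN.

```python
# toy comparison of a global and a local average for one target series
from numpy import array
from numpy import concatenate
from numpy import nan
from numpy import nanmean

# hypothetical observations of one series in two separate chunks
chunk_a = array([1.0, 2.0, nan, 3.0])
chunk_b = array([10.0, nan, 14.0])

# global strategy: one value computed across all chunks
global_mean = nanmean(concatenate([chunk_a, chunk_b]))
# local strategy: one value per chunk, using only that chunk's history
local_means = [nanmean(chunk_a), nanmean(chunk_b)]

print('Global mean: %.1f' % global_mean)
print('Local means: %s' % ', '.join('%.1f' % m for m in local_means))
```

The global value is the same for every chunk, whereas each local value follows the level of its own chunk.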

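## Model Evaluation

Before we can evaluate naive forecasting methods, we must develop a test harness.

This includes, at a minimum, how the data will be prepared and how forecasts will be evaluated.

### Load Dataset

The first step is to download the dataset and load it into memory.

The dataset can be downloaded for free from the Kaggle website. You may have to create an account and log in to download the dataset.

Download the entire dataset, e.g. "_Download All_", to your workstation and unzip the archive in your current working directory into a folder named '_AirQualityPrediction_'.

* [EMC Data Science Global Hackathon (Air Quality Prediction) Data](https://www.kaggle.com/c/dsg-hackathon/data)

Our focus will be the '_TrainingData.csv_' file that contains the training dataset, specifically data in chunks, where each chunk is eight contiguous days of observations and target variables.

We can load the data file into memory using the Pandas [read_csv() function](https://pandas.pydata.org/pandas-docs/stable/generated/pandas.read_csv.html) and specify the header row on line 0.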

```py
from pandas import read_csv
# load dataset
dataset = read_csv('AirQualityPrediction/TrainingData.csv', header=0)
```

We can group the data by the 'chunkID' variable (column index 1).

First, let's get a list of the unique chunk identifiers.

```py
chunk_ids = unique(values[:, 1])
```

We can then collect all rows for each chunk identifier and store them in a dictionary for easy access.

```py
chunks = dict()
# sort rows by chunk id
for chunk_id in chunk_ids:
    selection = values[:, 1] == chunk_id
    chunks[chunk_id] = values[selection, :]
```

Below is a function named _to_chunks()_ that takes a NumPy array of the loaded data and returns a dictionary that maps each _chunk_id_ to the rows for that chunk.

```py
# split the dataset by 'chunkID', return a dict of id to rows
def to_chunks(values, chunk_ix=1):
    chunks = dict()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks[chunk_id] = values[selection, :]
    return chunks
```
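To see what the grouping produces, here is a minimal sketch that applies the same logic to a tiny, made-up array. The three-column layout `[rowID, chunkID, value]` is an assumption for illustration only; the real file has many more columns.

```python
# group a tiny hypothetical array by its chunk id column
from numpy import array
from numpy import unique

# same grouping logic as in the tutorial, repeated so the sketch runs alone
def to_chunks(values, chunk_ix=1):
    chunks = dict()
    for chunk_id in unique(values[:, chunk_ix]):
        selection = values[:, chunk_ix] == chunk_id
        chunks[chunk_id] = values[selection, :]
    return chunks

# hypothetical rows: [rowID, chunkID, value]
values = array([
    [1, 1, 0.5],
    [2, 1, 0.7],
    [3, 2, 0.1],
    [4, 4, 0.9]])
chunks = to_chunks(values)
for chunk_id, rows in chunks.items():
    print('chunk=%d, rows=%d' % (chunk_id, rows.shape[0]))
```

Note that chunk identifiers do not need to be contiguous; only the identifiers that actually appear in the data become keys.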

The complete example that loads the dataset and splits it into chunks is listed below.

```py
# load data and split into chunks
from numpy import unique
from pandas import read_csv

# split the dataset by 'chunkID', return a dict of id to rows
def to_chunks(values, chunk_ix=1):
    chunks = dict()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks[chunk_id] = values[selection, :]
    return chunks

# load dataset
dataset = read_csv('AirQualityPrediction/TrainingData.csv', header=0)
# group data by chunks
values = dataset.values
chunks = to_chunks(values)
print('Total Chunks: %d' % len(chunks))
```

Running the example prints the number of chunks in the dataset.

```py
Total Chunks: 208
```

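### Data Preparation

Now that we know how to load the data and split it into chunks, we can separate the chunks into train and test datasets.

Each chunk covers an interval of eight days of hourly observations, although the number of actual observations within each chunk may vary widely.

We can split each chunk into the first five days of observations for training and the last three days for testing.

Each observation has a column called '_position_within_chunk_' that varies from 1 to 192 (8 days * 24 hours). We can therefore take all rows with a value in this column that is less than or equal to 120 (5 * 24) as training data and any values greater than 120 as test data.

Further, any chunks that don't have any observations in the train or test split can be dropped as not viable.

When working with the naive models, we are only interested in the target variables, and none of the input meteorological variables. Therefore, we can remove the input data and have the train and test data comprise only the 39 target variables for each chunk, as well as the position within the chunk and the hour of the observation.

The _split_train_test()_ function below implements this behavior; given a dictionary of chunks, it will split each into train and test chunk data.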

```py
# split each chunk into train/test sets
def split_train_test(chunks, row_in_chunk_ix=2):
    train, test = list(), list()
    # first 5 days of hourly observations for train
    cut_point = 5 * 24
    # enumerate chunks
    for k, rows in chunks.items():
        # split chunk rows by 'position_within_chunk'
        train_rows = rows[rows[:, row_in_chunk_ix] <= cut_point, :]
        test_rows = rows[rows[:, row_in_chunk_ix] > cut_point, :]
        if len(train_rows) == 0 or len(test_rows) == 0:
            print('>dropping chunk=%d: train=%s, test=%s' % (k, train_rows.shape, test_rows.shape))
            continue
        # store with chunk id, position in chunk, hour and all targets
        indices = [1, 2, 5] + [x for x in range(56, train_rows.shape[1])]
        train.append(train_rows[:, indices])
        test.append(test_rows[:, indices])
    return train, test
```
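The core of the split is a pair of boolean masks on the position column. The sketch below shows this on a small hypothetical chunk with the assumed layout `[rowID, chunkID, position_within_chunk, target]`; it is an illustration of the masking step, not a replacement for the function above.

```python
# split one hypothetical chunk at the 5-day cut point
from numpy import array

cut_point = 5 * 24
# hypothetical rows: [rowID, chunkID, position_within_chunk, target]
rows = array([
    [10, 1, 119, 0.2],
    [11, 1, 120, 0.3],
    [12, 1, 121, 0.4],
    [13, 1, 192, 0.5]])
# positions 1..120 go to train, positions 121..192 go to test
train_rows = rows[rows[:, 2] <= cut_point, :]
test_rows = rows[rows[:, 2] > cut_point, :]
print('Train: %s, Test: %s' % (train_rows.shape, test_rows.shape))
```

Position 120 is the last training hour, so the first forecast lead time (+1) corresponds to position 121.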

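We do not require the entire test dataset; instead, we only require the observations at specific lead times over the three-day period, specifically the lead times:

```py
+1, +2, +3, +4, +5, +10, +17, +24, +48, +72
```

Here, each lead time is relative to the end of the training period.

First, we can put these lead times into a function for easy reference: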

```py
# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]
```

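Next, we can reduce the test dataset down to just the data at the preferred lead times.

We can do that by looking at the '_position_within_chunk_' column and using the lead time as an offset from the end of the training dataset, e.g. 120 + 1, 120 + 2, and so on.

If we find a matching row in the test set, it is saved; otherwise a row of NaN observations is generated.

The function _to_forecasts()_ below implements this and returns a NumPy array with one row for each forecast lead time for each chunk.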

```py
# convert the rows in a test chunk to forecasts
def to_forecasts(test_chunks, row_in_chunk_ix=1):
    # get lead times
    lead_times = get_lead_times()
    # first 5 days of hourly observations for train
    cut_point = 5 * 24
    forecasts = list()
    # enumerate each chunk
    for rows in test_chunks:
        chunk_id = rows[0, 0]
        # enumerate each lead time
        for tau in lead_times:
            # determine the row in chunk we want for the lead time
            offset = cut_point + tau
            # retrieve data for the lead time using row number in chunk
            row_for_tau = rows[rows[:, row_in_chunk_ix] == offset, :]
            # check if we have data
            if len(row_for_tau) == 0:
                # create a mock row [chunk, position, hour] + [nan...]
                row = [chunk_id, offset, nan] + [nan for _ in range(39)]
                forecasts.append(row)
            else:
                # store the forecast row
                forecasts.append(row_for_tau[0])
    return array(forecasts)
```
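As a rough illustration of the offset arithmetic, the sketch below converts the lead times into positions within the chunk and looks each one up in a small hypothetical test chunk. The four-column layout `[chunkID, position_within_chunk, hour, target]` and the values are assumptions; the real rows carry 39 targets.

```python
# map lead times to chunk positions and fill gaps with NaN rows
from numpy import array
from numpy import nan

lead_times = [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]
cut_point = 5 * 24
# positions within the chunk that must be forecast
offsets = [cut_point + tau for tau in lead_times]

# hypothetical test rows: [chunkID, position_within_chunk, hour, target]
test_rows = array([
    [1, 121, 7, 0.4],
    [1, 130, 16, 0.6]])
selected = list()
for offset in offsets:
    match = test_rows[test_rows[:, 1] == offset, :]
    if len(match) == 0:
        # no observation at this lead time: mock row with NaN hour and target
        selected.append([1, offset, nan, nan])
    else:
        selected.append(match[0])
selected = array(selected)
print(offsets)
print(selected.shape)
```

Every chunk therefore contributes exactly ten rows, whether or not an observation exists at each lead time.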

We can tie all of this together, split the dataset into train and test sets, and save the results to new files.

The complete code example is listed below.

```py
# split data into train and test sets
from numpy import unique
from numpy import nan
from numpy import array
from numpy import savetxt
from pandas import read_csv

# split the dataset by 'chunkID', return a dict of id to rows
def to_chunks(values, chunk_ix=1):
    chunks = dict()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks[chunk_id] = values[selection, :]
    return chunks

# split each chunk into train/test sets
def split_train_test(chunks, row_in_chunk_ix=2):
    train, test = list(), list()
    # first 5 days of hourly observations for train
    cut_point = 5 * 24
    # enumerate chunks
    for k, rows in chunks.items():
        # split chunk rows by 'position_within_chunk'
        train_rows = rows[rows[:, row_in_chunk_ix] <= cut_point, :]
        test_rows = rows[rows[:, row_in_chunk_ix] > cut_point, :]
        if len(train_rows) == 0 or len(test_rows) == 0:
            print('>dropping chunk=%d: train=%s, test=%s' % (k, train_rows.shape, test_rows.shape))
            continue
        # store with chunk id, position in chunk, hour and all targets
        indices = [1, 2, 5] + [x for x in range(56, train_rows.shape[1])]
        train.append(train_rows[:, indices])
        test.append(test_rows[:, indices])
    return train, test

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# convert the rows in a test chunk to forecasts
def to_forecasts(test_chunks, row_in_chunk_ix=1):
    # get lead times
    lead_times = get_lead_times()
    # first 5 days of hourly observations for train
    cut_point = 5 * 24
    forecasts = list()
    # enumerate each chunk
    for rows in test_chunks:
        chunk_id = rows[0, 0]
        # enumerate each lead time
        for tau in lead_times:
            # determine the row in chunk we want for the lead time
            offset = cut_point + tau
            # retrieve data for the lead time using row number in chunk
            row_for_tau = rows[rows[:, row_in_chunk_ix] == offset, :]
            # check if we have data
            if len(row_for_tau) == 0:
                # create a mock row [chunk, position, hour] + [nan...]
                row = [chunk_id, offset, nan] + [nan for _ in range(39)]
                forecasts.append(row)
            else:
                # store the forecast row
                forecasts.append(row_for_tau[0])
    return array(forecasts)

# load dataset
dataset = read_csv('AirQualityPrediction/TrainingData.csv', header=0)
# group data by chunks
values = dataset.values
chunks = to_chunks(values)
# split into train/test
train, test = split_train_test(chunks)
# flatten training chunks to rows
train_rows = array([row for rows in train for row in rows])
print('Train Rows: %s' % str(train_rows.shape))
# reduce test to forecast lead times only
test_rows = to_forecasts(test)
print('Test Rows: %s' % str(test_rows.shape))
# save datasets
savetxt('AirQualityPrediction/naive_train.csv', train_rows, delimiter=',')
savetxt('AirQualityPrediction/naive_test.csv', test_rows, delimiter=',')
```

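Running the example first reports that chunk 69 is removed from the dataset for having insufficient data.

We can then see that there are 42 columns in each of the train and test sets: one each for the chunk ID, the position within the chunk, and the hour of day, plus the 39 target variables.

We can also see the dramatically smaller version of the test dataset, with rows only at the forecast lead times.

The new train and test datasets are saved in the '_naive_train.csv_' and '_naive_test.csv_' files respectively.

```py
>dropping chunk=69: train=(0, 95), test=(28, 95)
Train Rows: (23514, 42)
Test Rows: (2070, 42)
```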

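### Forecast Evaluation

Once forecasts have been made, they need to be evaluated.

It is helpful to have a simpler format when evaluating forecasts. For example, we will use a three-dimensional structure of _[chunk][variable][time]_, where variable is the target variable number from 0 to 38 and time is the lead time index from 0 to 9.

Models are expected to make predictions in this format.

We can also restructure the test dataset into this format for comparison. The _prepare_test_forecasts()_ function below implements this.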

```py
# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)
```
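For a single chunk, the restructuring amounts to dropping the three identifier columns and swapping the axes from [time][variable] to [variable][time]. The sketch below checks this on a hypothetical chunk with only two targets and three lead times; the slice-and-transpose form is offered as an equivalent illustration, not as the tutorial's implementation.

```python
# show that the per-chunk restructuring is a slice followed by a transpose
from numpy import array
from numpy import array_equal

# hypothetical test chunk: 3 lead-time rows, 3 id columns + 2 target columns
rows = array([
    [1, 121, 7, 0.1, 1.1],
    [1, 122, 8, 0.2, 1.2],
    [1, 123, 9, 0.3, 1.3]])
# loop form: one row per target variable, one column per lead time
looped = array([rows[:, j] for j in range(3, rows.shape[1])])
# equivalent form: drop the id columns, then transpose
transposed = rows[:, 3:].T
print(looped.shape, array_equal(looped, transposed))
```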

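We will evaluate a model using the mean absolute error, or MAE. This is the metric that was used in the competition and is a sensible choice given the non-Gaussian distribution of the target variables.

If a lead time contains no data in the test set (e.g. _NaN_), then no error will be calculated for that forecast. If the lead time does have data in the test set but no data in the forecast, then the full magnitude of the observation will be taken as the error. Finally, if the test set has an observation and a forecast was made, then the absolute difference will be recorded as the error.

The _calculate_error()_ function implements these rules and returns the error for a given forecast.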

```py
# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)
```
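The three rules can be traced on a handful of made-up (actual, predicted) pairs. The values below are hypothetical, and the skip for a missing actual value is written inline here because, in the tutorial, that check lives in the evaluation loop rather than in _calculate_error()_.

```python
# trace the three scoring rules on hypothetical (actual, predicted) pairs
from numpy import isnan
from numpy import nan

# same rule as the tutorial function, repeated so the sketch runs alone
def calculate_error(actual, predicted):
    if isnan(predicted):
        return abs(actual)
    return abs(actual - predicted)

pairs = [
    (nan, 0.5),   # no observation: skipped, contributes nothing
    (2.0, nan),   # observation but no forecast: error is abs(actual)
    (2.0, 1.5)]   # observation and forecast: absolute difference
errors = list()
for actual, predicted in pairs:
    if isnan(actual):
        continue
    errors.append(calculate_error(actual, predicted))
mae = sum(errors) / len(errors)
print('MAE: %.3f' % mae)
```

Only two of the three pairs are counted, so the mean is taken over two errors rather than three.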

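Errors are summed across all chunks and all lead times, then averaged.

The overall MAE will be calculated, but we will also calculate an MAE for each forecast lead time. This can help with model selection generally, as some models may perform differently at different lead times.

The _evaluate_forecasts()_ function below implements this, calculating the overall MAE and the per-lead-time MAE for the provided predictions and expected values in _[chunk][variable][time]_ format.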

```py
# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not a global variable)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae
```
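The same scoring rules can also be written with array operations. The sketch below is an alternative formulation, not the tutorial's code: the name `evaluate_vectorized` is hypothetical, and it assumes that both inputs are float NumPy arrays of identical shape _[chunk][variable][time]_. Replacing a missing prediction with zero reproduces the "full magnitude" rule, because `abs(actual - 0)` equals `abs(actual)`.

```python
# vectorized sketch of the overall and per-lead-time MAE
from numpy import absolute
from numpy import array
from numpy import isnan
from numpy import nan
from numpy import nanmean
from numpy import where

# hypothetical helper; inputs are float arrays shaped [chunk][variable][time]
def evaluate_vectorized(predictions, testset):
    # a missing prediction scores the full magnitude of the observation
    filled = where(isnan(predictions), 0.0, predictions)
    # the error stays NaN wherever the actual value is missing
    errors = absolute(testset - filled)
    # NaN-aware means skip the missing actual values
    total_mae = nanmean(errors)
    times_mae = nanmean(errors, axis=(0, 1))
    return total_mae, times_mae

# toy data: 2 chunks, 1 variable, 2 lead times
testset = array([[[1.0, nan]], [[3.0, 2.0]]])
predictions = array([[[0.5, 0.5]], [[nan, 1.0]]])
total_mae, times_mae = evaluate_vectorized(predictions, testset)
print('%.3f' % total_mae, ['%.3f' % t for t in times_mae])
```

One difference in edge-case behavior: if a lead time has no observations at all, this sketch yields NaN with a warning for that lead time, whereas the loop version would divide by zero.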

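Once we have the evaluation of a model, we can present it.

The _summarize_error()_ function below first prints a one-line summary of a model's performance and then creates a plot of the MAE for each forecast lead time.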

```py
# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()
```

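We are now ready to start exploring the performance of naive forecasting methods.

## Global Naive Methods

In this section, we will explore naive forecast methods that use all data in the training dataset, rather than being constrained to the chunk for which we are making a prediction.

We will look at two approaches:

* Forecast Average Value per Series

* Forecast Average Value for Hour-of-Day per Series

### Forecast Average Value per Series

The first step is to implement a general function for making a forecast for each chunk.

The function takes the training dataset and the input columns (chunk ID, position in chunk, and hour) for the test set, and returns forecasts for all chunks in the expected 3D format of _[chunk][variable][time]_.

The function enumerates the chunks in the forecast, then enumerates the 39 target columns, calling another new function named _forecast_variable()_ in order to make a prediction for each lead time for a given target variable.

The complete function is listed below.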

```py
# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)
```

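We can now implement a version of _forecast_variable()_ that calculates the mean for a given series and forecasts that mean for each lead time.

First, we must collect all observations in the target column across all chunks, then calculate the average of the observations while ignoring the NaN values. The _nanmean()_ NumPy function calculates the mean of an array and ignores _NaN_ values.

The _forecast_variable()_ function below implements this behavior.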

```py
# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # collect obs from all chunks
    all_obs = list()
    for chunk in train_chunks:
        all_obs += [x for x in chunk[:, col_ix]]
    # return the average, ignoring nan
    value = nanmean(all_obs)
    return [value for _ in lead_times]
```

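We now have everything we need.

The complete example of forecasting the global mean for each series across all forecast lead times is listed below.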

```py
# forecast global mean
from numpy import loadtxt
from numpy import nan
from numpy import isnan
from numpy import unique
from numpy import array
from numpy import nanmean
from matplotlib import pyplot

# split the dataset by 'chunkID', return a list of chunks
def to_chunks(values, chunk_ix=0):
    chunks = list()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks.append(values[selection, :])
    return chunks

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # collect obs from all chunks
    all_obs = list()
    for chunk in train_chunks:
        all_obs += [x for x in chunk[:, col_ix]]
    # return the average, ignoring nan
    value = nanmean(all_obs)
    return [value for _ in lead_times]

# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)

# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not a global variable)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae

# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()

# load dataset
train = loadtxt('AirQualityPrediction/naive_train.csv', delimiter=',')
test = loadtxt('AirQualityPrediction/naive_test.csv', delimiter=',')
# group data by chunks
train_chunks = to_chunks(train)
test_chunks = to_chunks(test)
# forecast
test_input = [rows[:, :3] for rows in test_chunks]
forecast = forecast_chunks(train_chunks, test_input)
# evaluate forecast
actual = prepare_test_forecasts(test_chunks)
total_mae, times_mae = evaluate_forecasts(forecast, actual)
# summarize forecast
summarize_error('Global Mean', total_mae, times_mae)
```

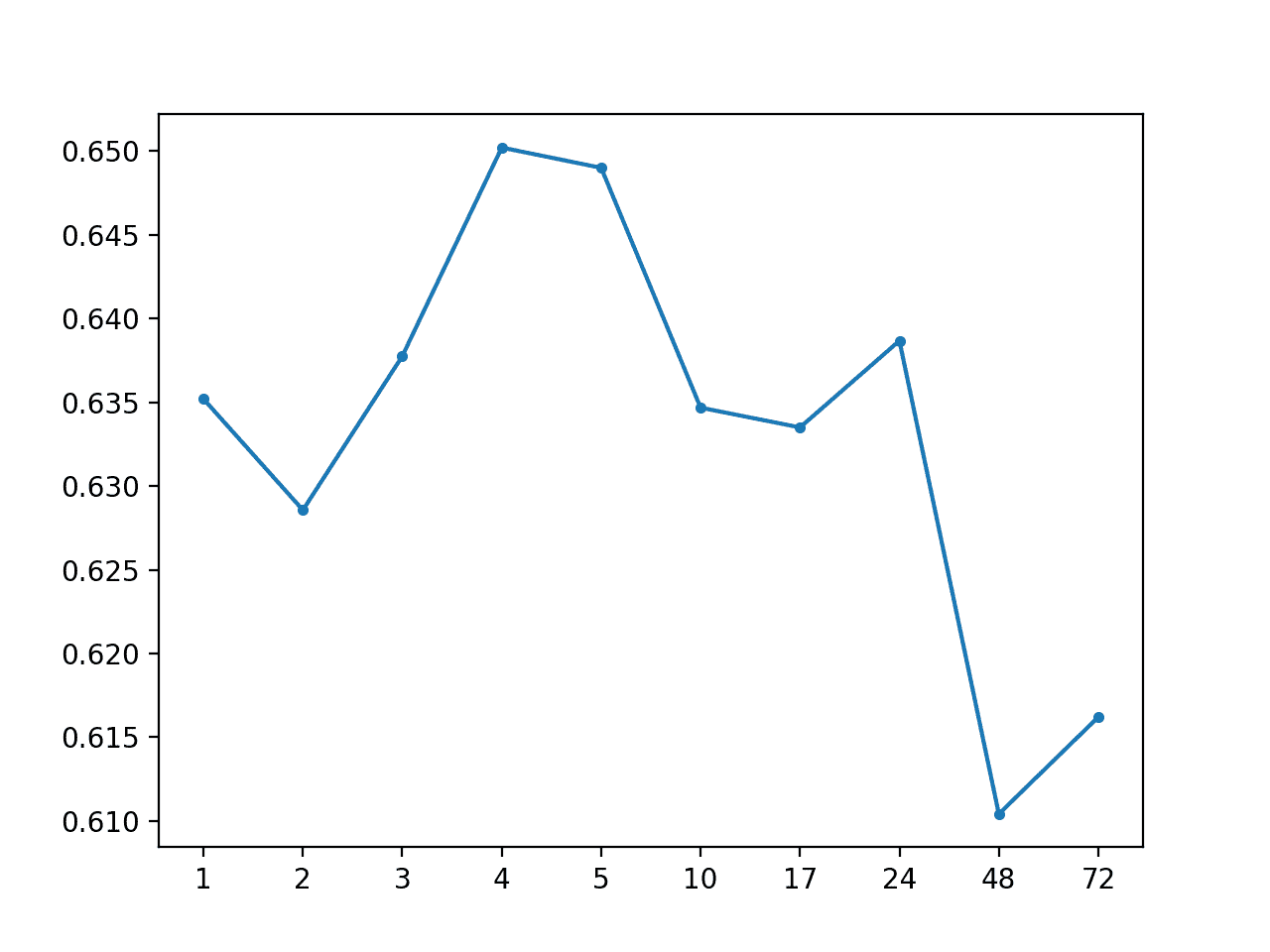

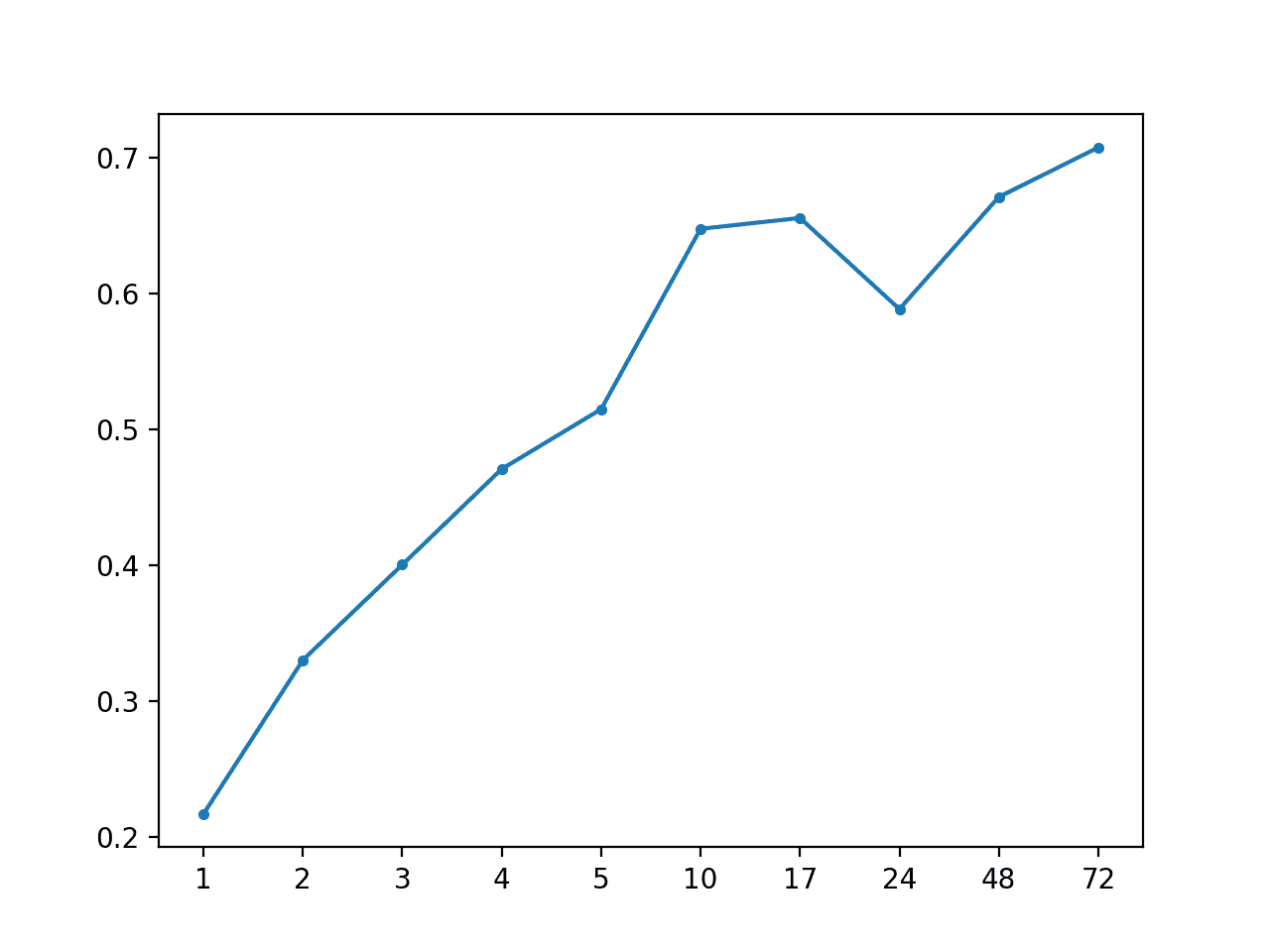

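Running the example first prints the overall MAE of 0.634, followed by the MAE scores for each forecast lead time.

```py
Global Mean: [0.634 MAE] +1 0.635, +2 0.629, +3 0.638, +4 0.650, +5 0.649, +10 0.635, +17 0.634, +24 0.641, +48 0.613, +72 0.618
```

A line plot is created showing the MAE scores for each forecast lead time from +1 hour to +72 hours.

We cannot see any obvious relationship between forecast lead time and forecast error, as we might expect with a more skillful model.

MAE by Forecast Lead Time With Global Mean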

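We can update the example to forecast the global median instead of the mean.

The median may make more sense than the mean as a measure of central tendency for this data, given the non-Gaussian distribution the data seems to show.

NumPy provides the _nanmedian()_ function that we can use in place of _nanmean()_ in the _forecast_variable()_ function.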
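To get a feel for why the two statistics can differ on skewed data, consider the small sketch below. The observations are made up for illustration: most values are small, one is large, and one is missing.

```python
# compare NaN-aware mean and median on hypothetical skewed observations
from numpy import array
from numpy import nan
from numpy import nanmean
from numpy import nanmedian

obs = array([0.1, 0.2, 0.2, 0.3, nan, 5.0])
mean_value = nanmean(obs)
median_value = nanmedian(obs)
print('mean=%.2f, median=%.2f' % (mean_value, median_value))
```

The single large value pulls the mean well above the typical observation, while the median stays with the bulk of the data. Under an absolute-error metric, this kind of robustness is one plausible reason for a median forecast to score better.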

The complete updated example is listed below.

```py
# forecast global median
from numpy import loadtxt
from numpy import nan
from numpy import isnan
from numpy import unique
from numpy import array
from numpy import nanmedian
from matplotlib import pyplot

# split the dataset by 'chunkID', return a list of chunks
def to_chunks(values, chunk_ix=0):
    chunks = list()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks.append(values[selection, :])
    return chunks

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # collect obs from all chunks
    all_obs = list()
    for chunk in train_chunks:
        all_obs += [x for x in chunk[:, col_ix]]
    # return the median, ignoring nan
    value = nanmedian(all_obs)
    return [value for _ in lead_times]

# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)

# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not a global variable)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae

# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()

# load dataset
train = loadtxt('AirQualityPrediction/naive_train.csv', delimiter=',')
test = loadtxt('AirQualityPrediction/naive_test.csv', delimiter=',')
# group data by chunks
train_chunks = to_chunks(train)
test_chunks = to_chunks(test)
# forecast
test_input = [rows[:, :3] for rows in test_chunks]
forecast = forecast_chunks(train_chunks, test_input)
# evaluate forecast
actual = prepare_test_forecasts(test_chunks)
total_mae, times_mae = evaluate_forecasts(forecast, actual)
# summarize forecast
summarize_error('Global Median', total_mae, times_mae)
```

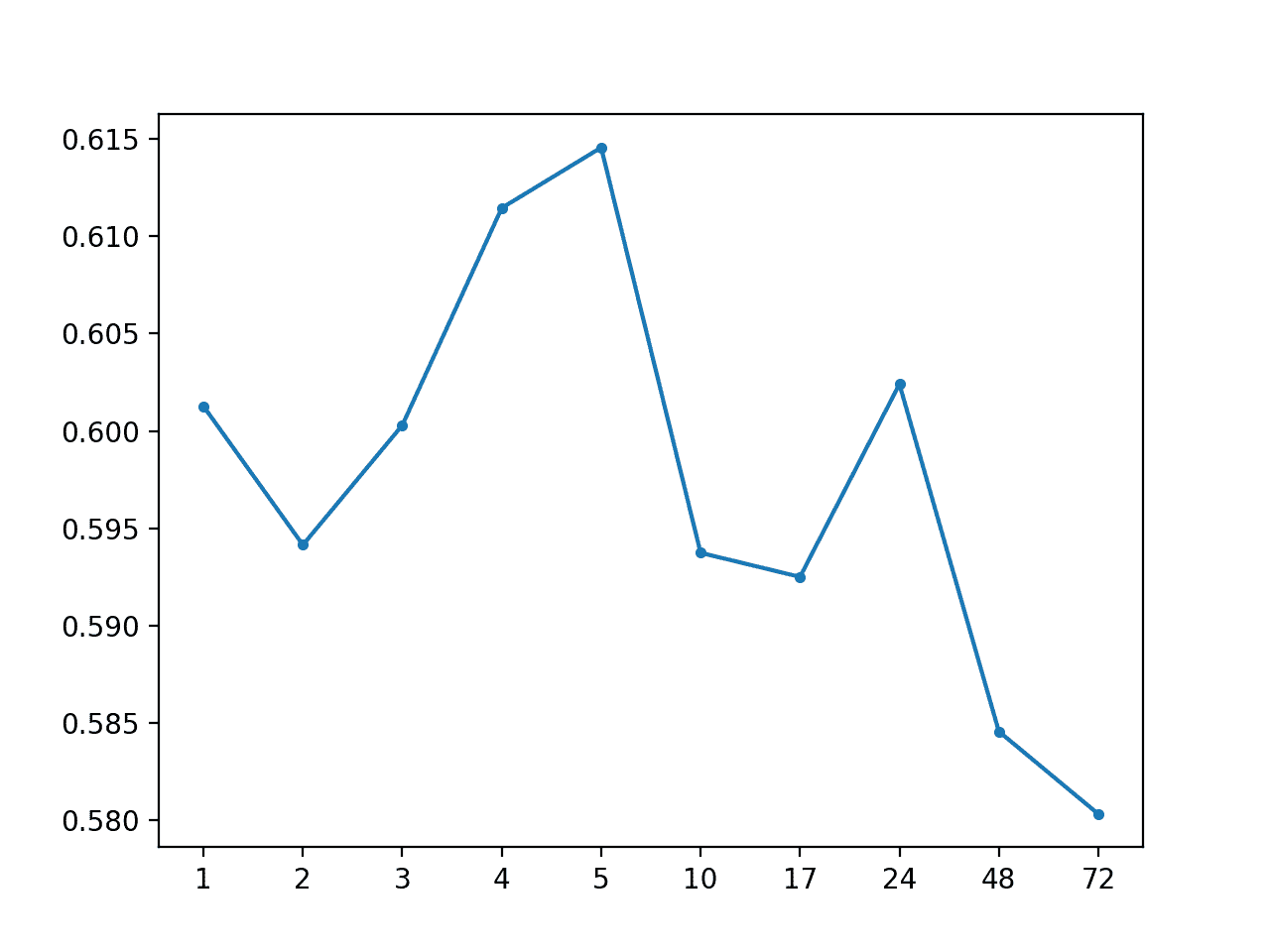

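Running the example shows a drop in MAE to 0.598, suggesting that using the median as the measure of central tendency may indeed be a better baseline strategy.

```py
Global Median: [0.598 MAE] +1 0.601, +2 0.594, +3 0.600, +4 0.611, +5 0.615, +10 0.594, +17 0.592, +24 0.602, +48 0.585, +72 0.580
```

A line plot of MAE per lead time is also created.

MAE by Forecast Lead Time With Global Median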

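### Forecast Average Value for Hour-of-Day per Series

We can update the naive model that calculates a central tendency per series so that it only includes rows that have the same hour of day as the forecast lead time.

For example, if the +1 lead time falls on hour 6 (e.g. 0600 or 6AM), then we can find all other rows in the training dataset across all chunks for that hour and calculate the median value for a given target variable from those rows.

We record the hour of day in the test dataset and make it available to the model when making forecasts. One snag is that in some cases the test dataset did not have a record for a given lead time, and one had to be invented with _NaN_ values, including a _NaN_ value for the hour. In these cases, no forecast is required, so we will skip them and forecast a _NaN_ value.

The _forecast_variable()_ function below implements this behavior, returning forecasts for each lead time for a given variable.

It is not very efficient; it would likely be much more efficient to pre-calculate the median values for each hour for each variable first and then forecast using a lookup table. Efficiency is not a concern at this point, as we are looking for a baseline of model performance.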

```py
# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    forecast = list()
    # convert target number into column number
    col_ix = 3 + target_ix
    # enumerate lead times
    for i in range(len(lead_times)):
        # get the hour for this forecast lead time
        hour = chunk_test[i, 2]
        # check for no test data
        if isnan(hour):
            forecast.append(nan)
            continue
        # get all rows in training for this hour
        all_rows = list()
        for rows in train_chunks:
            all_rows.extend(rows[rows[:, 2] == hour])
        # calculate the central tendency for target
        all_rows = array(all_rows)
        value = nanmedian(all_rows[:, col_ix])
        forecast.append(value)
    return forecast
```
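The lookup-table idea mentioned above could be sketched roughly as follows. This is an assumption-laden illustration rather than part of the tutorial: the helper name `build_hourly_medians` and its parameters are hypothetical, and the toy chunks use the layout `[chunkID, position, hour, target]` with a single target column.

```python
# precompute per-hour medians once, then forecast with a dictionary lookup
from numpy import array
from numpy import isnan
from numpy import nan
from numpy import nanmedian
from numpy import unique
from numpy import vstack

# hypothetical helper: map hour of day -> list of medians, one per target
def build_hourly_medians(train_chunks, n_targets, hour_ix=2, first_target_ix=3):
    all_rows = vstack(train_chunks)
    table = dict()
    for hour in unique(all_rows[:, hour_ix]):
        # ignore rows without a recorded hour
        if isnan(hour):
            continue
        rows = all_rows[all_rows[:, hour_ix] == hour]
        table[int(hour)] = [nanmedian(rows[:, first_target_ix + j]) for j in range(n_targets)]
    return table

# toy training chunks: [chunkID, position, hour, target]
train_chunks = [
    array([[1, 1, 6, 0.1], [1, 2, 7, 0.5]]),
    array([[2, 1, 6, 0.3], [2, 2, 7, nan]])]
table = build_hourly_medians(train_chunks, n_targets=1)
print(table[6][0], table[7][0])
```

With such a table, a forecast for a lead time that falls on hour `h` for target `j` reduces to `table[int(h)][j]`, so the training data is scanned once rather than once per chunk, variable, and lead time.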

The complete example of forecasting the global median value by hour of day is listed below.

```py
# forecast global median by hour of day
from numpy import loadtxt
from numpy import nan
from numpy import isnan
from numpy import unique
from numpy import array
from numpy import nanmedian
from matplotlib import pyplot

# split the dataset by 'chunkID', return a list of chunks
def to_chunks(values, chunk_ix=0):
    chunks = list()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks.append(values[selection, :])
    return chunks

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    forecast = list()
    # convert target number into column number
    col_ix = 3 + target_ix
    # enumerate lead times
    for i in range(len(lead_times)):
        # get the hour for this forecast lead time
        hour = chunk_test[i, 2]
        # check for no test data
        if isnan(hour):
            forecast.append(nan)
            continue
        # get all rows in training for this hour
        all_rows = list()
        for rows in train_chunks:
            all_rows.extend(rows[rows[:, 2] == hour])
        # calculate the central tendency for target
        all_rows = array(all_rows)
        value = nanmedian(all_rows[:, col_ix])
        forecast.append(value)
    return forecast

# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)

# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not a global variable)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae

# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()

# load dataset
train = loadtxt('AirQualityPrediction/naive_train.csv', delimiter=',')
test = loadtxt('AirQualityPrediction/naive_test.csv', delimiter=',')
# group data by chunks
train_chunks = to_chunks(train)
test_chunks = to_chunks(test)
# forecast
test_input = [rows[:, :3] for rows in test_chunks]
forecast = forecast_chunks(train_chunks, test_input)
# evaluate forecast
actual = prepare_test_forecasts(test_chunks)
total_mae, times_mae = evaluate_forecasts(forecast, actual)
# summarize forecast
summarize_error('Global Median by Hour', total_mae, times_mae)
```

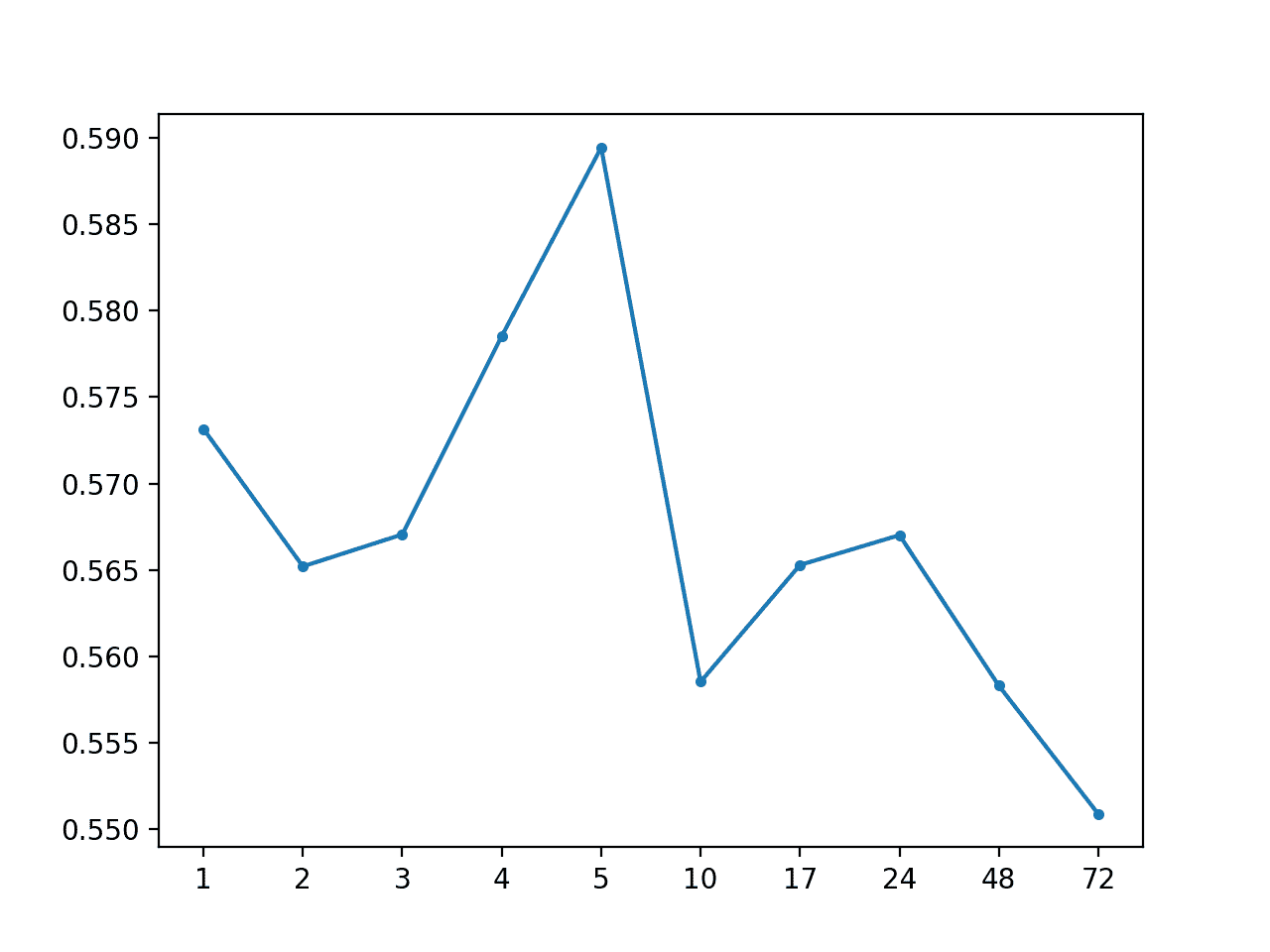

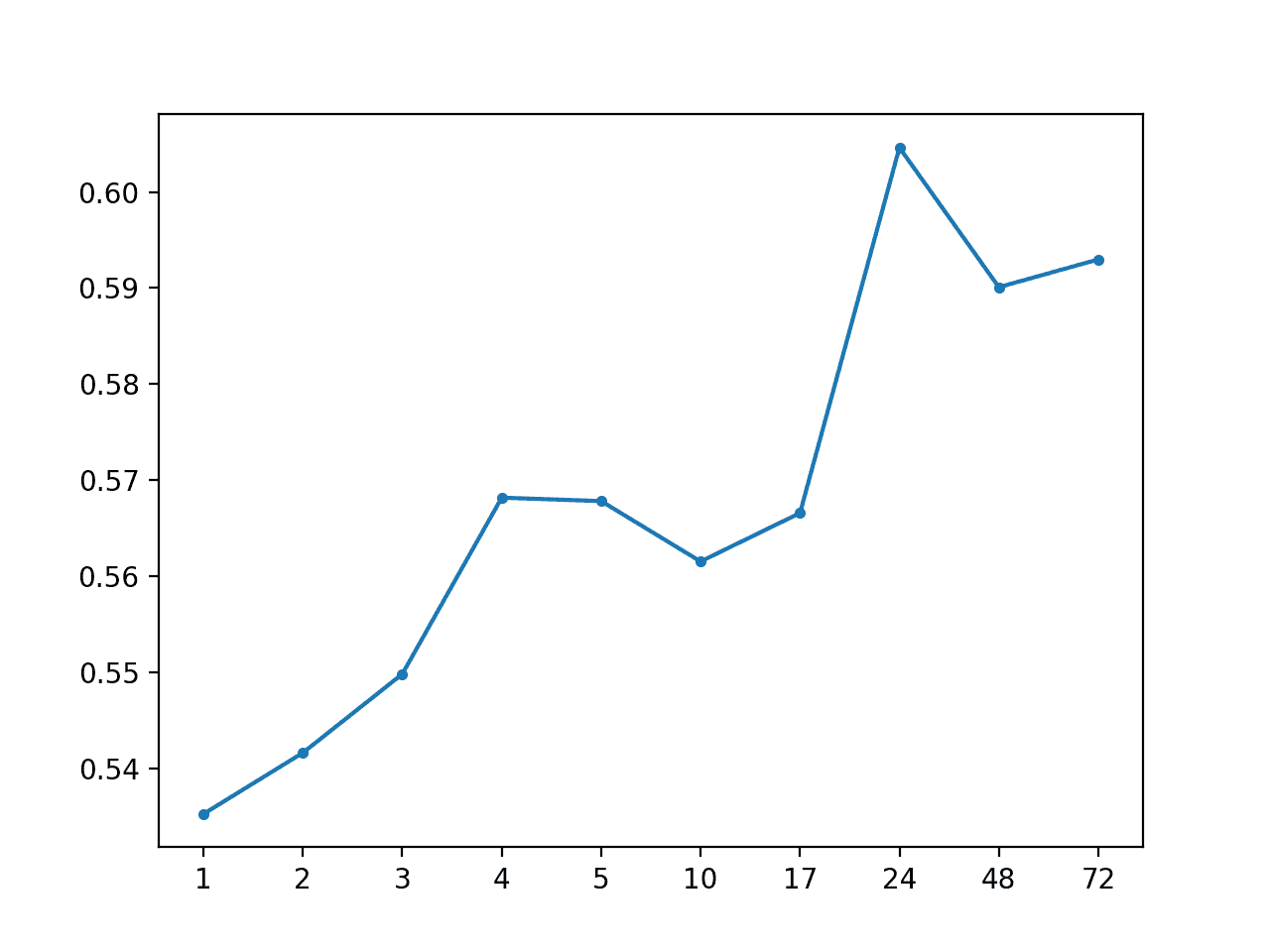

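Running the example summarizes the performance of the model with an MAE of 0.567, which is an improvement over the global median for each series.

```py
Global Median by Hour: [0.567 MAE] +1 0.573, +2 0.565, +3 0.567, +4 0.579, +5 0.589, +10 0.559, +17 0.565, +24 0.567, +48 0.558, +72 0.551
```

A line plot of the MAE by forecast lead time is also created, showing that +72 had the lowest overall forecast error. This is interesting, and may suggest that hour-based information could be useful in more sophisticated models.

MAE by Forecast Lead Time With Global Median By Hour of Day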

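## Chunk Naive Methods

It is possible that using information specific to the chunk may have more predictive power than using global information from the entire training dataset.

We can explore this with three local or chunk-specific naive forecasting methods; they are:

* Forecast Last Observation per Series

* Forecast Average Value per Series

* Forecast Average Value for Hour-of-Day per Series

The last two are the chunk-specific versions of the global strategies that were evaluated in the previous section.

### Forecast Last Observation per Series

Forecasting the last non-NaN observation for a chunk is perhaps the simplest model, classically called the persistence model or the naive model.

The _forecast_variable()_ function below implements this forecast strategy.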

```py
# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # extract the history for the series
    history = chunk_train[:, col_ix]
    # persist a nan if we do not find any valid data
    persisted = nan
    # enumerate history in reverse order looking for the first non-nan
    for value in reversed(history):
        if not isnan(value):
            persisted = value
            break
    # persist the same value for all lead times
    forecast = [persisted for _ in range(len(lead_times))]
    return forecast
```
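The backward scan for the last valid observation can also be expressed with NumPy indexing. The sketch below is an equivalent formulation offered for illustration; the helper name `last_valid` is hypothetical and the input values are made up.

```python
# find the last non-NaN observation in a series without an explicit loop
from numpy import array
from numpy import flatnonzero
from numpy import isnan
from numpy import nan

# hypothetical helper: return the last valid value, or NaN if there is none
def last_valid(history):
    # indices of all valid (non-NaN) observations, in ascending order
    valid_ix = flatnonzero(~isnan(history))
    if len(valid_ix) == 0:
        return nan
    return history[valid_ix[-1]]

print(last_valid(array([0.1, 0.4, nan, nan])))
print(last_valid(array([nan, nan])))
```

When a series has no valid history in the chunk, NaN is persisted, and under the evaluation rules above such a forecast is scored with the full magnitude of the observation.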

The complete example of evaluating the persistence forecast strategy on the test set is listed below.

```py
# persist last observation
from numpy import loadtxt
from numpy import nan
from numpy import isnan
from numpy import unique
from numpy import array
from matplotlib import pyplot

# split the dataset by 'chunkID', return a list of chunks
def to_chunks(values, chunk_ix=0):
    chunks = list()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks.append(values[selection, :])
    return chunks

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # extract the history for the series
    history = chunk_train[:, col_ix]
    # persist a nan if we do not find any valid data
    persisted = nan
    # enumerate history in reverse order looking for the first non-nan
    for value in reversed(history):
        if not isnan(value):
            persisted = value
            break
    # persist the same value for all lead times
    forecast = [persisted for _ in range(len(lead_times))]
    return forecast

# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)

# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not the global test_chunks)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae

# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()

# load dataset
train = loadtxt('AirQualityPrediction/naive_train.csv', delimiter=',')
test = loadtxt('AirQualityPrediction/naive_test.csv', delimiter=',')
# group data by chunks
train_chunks = to_chunks(train)
test_chunks = to_chunks(test)
# forecast
test_input = [rows[:, :3] for rows in test_chunks]
forecast = forecast_chunks(train_chunks, test_input)
# evaluate forecast
actual = prepare_test_forecasts(test_chunks)
total_mae, times_mae = evaluate_forecasts(forecast, actual)
# summarize forecast
summarize_error('Persistence', total_mae, times_mae)
```

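Running the example prints the overall MAE and the MAE for each forecast lead time.

We can see that the persistence forecast appears to out-perform all of the global strategies evaluated in the previous section.

This lends some support to the reasonable assumption that chunk-specific information is important when modeling this problem.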

```py

Persistence: [0.520 MAE] +1 0.217, +2 0.330, +3 0.400, +4 0.471, +5 0.515, +10 0.648, +17 0.656, +24 0.589, +48 0.671, +72 0.708

```

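A line plot of MAE per forecast lead time is created.

Importantly, the plot shows the expected behavior of error generally increasing with forecast lead time, with a dip at +24. That is, the further into the future we forecast, the more challenging it is and, in turn, the more error we expect.

MAE by Forecast Lead Time via Persistence

### Forecast Average for Each Series

Instead of persisting the last observation for the series, we can persist the average value of the series using only the data in the chunk.

Specifically, we can calculate the median of the series, which, as we found in the previous section, seems to lead to better performance than the mean.

The _forecast_variable()_ function below implements this local strategy.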

```py
# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # extract the history for the series
    history = chunk_train[:, col_ix]
    # calculate the central tendency
    value = nanmedian(history)
    # persist the same value for all lead times
    forecast = [value for _ in range(len(lead_times))]
    return forecast
```
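It can help to see how `nanmedian()` treats missing values before running the full harness. The sketch below uses made-up toy values. It shows that NaNs are ignored when at least one observation is valid, and that an all-NaN series yields NaN (NumPy also emits a `RuntimeWarning` in that case, which is silenced here).

```python
import warnings
from numpy import array, nan, isnan, nanmedian

# toy history (made-up values) with two missing observations
history = array([0.2, nan, 0.9, 0.4, nan])
# the median is taken over the valid values 0.2, 0.4 and 0.9 only
print(nanmedian(history))

# a series with no valid observations
all_missing = array([nan, nan, nan])
with warnings.catch_warnings():
    # NumPy warns about an all-NaN slice; ignore it for this demonstration
    warnings.simplefilter('ignore', category=RuntimeWarning)
    value = nanmedian(all_missing)
# the result is NaN, so the forecast for this series is NaN at every lead time
print(isnan(value))
```

In the harness, a NaN forecast is scored by _calculate_error()_ as the full absolute value of the actual observation, so series with no training data in a chunk still contribute to the MAE.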

The complete example is listed below.

```py
# forecast local median
from numpy import loadtxt
from numpy import isnan
from numpy import unique
from numpy import array
from numpy import nanmedian
from matplotlib import pyplot

# split the dataset by 'chunkID', return a list of chunks
def to_chunks(values, chunk_ix=0):
    chunks = list()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks.append(values[selection, :])
    return chunks

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    # convert target number into column number
    col_ix = 3 + target_ix
    # extract the history for the series
    history = chunk_train[:, col_ix]
    # calculate the central tendency
    value = nanmedian(history)
    # persist the same value for all lead times
    forecast = [value for _ in range(len(lead_times))]
    return forecast

# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)

# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not the global test_chunks)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae

# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()

# load dataset
train = loadtxt('AirQualityPrediction/naive_train.csv', delimiter=',')
test = loadtxt('AirQualityPrediction/naive_test.csv', delimiter=',')
# group data by chunks
train_chunks = to_chunks(train)
test_chunks = to_chunks(test)
# forecast
test_input = [rows[:, :3] for rows in test_chunks]
forecast = forecast_chunks(train_chunks, test_input)
# evaluate forecast
actual = prepare_test_forecasts(test_chunks)
total_mae, times_mae = evaluate_forecasts(forecast, actual)
# summarize forecast
summarize_error('Local Median', total_mae, times_mae)
```

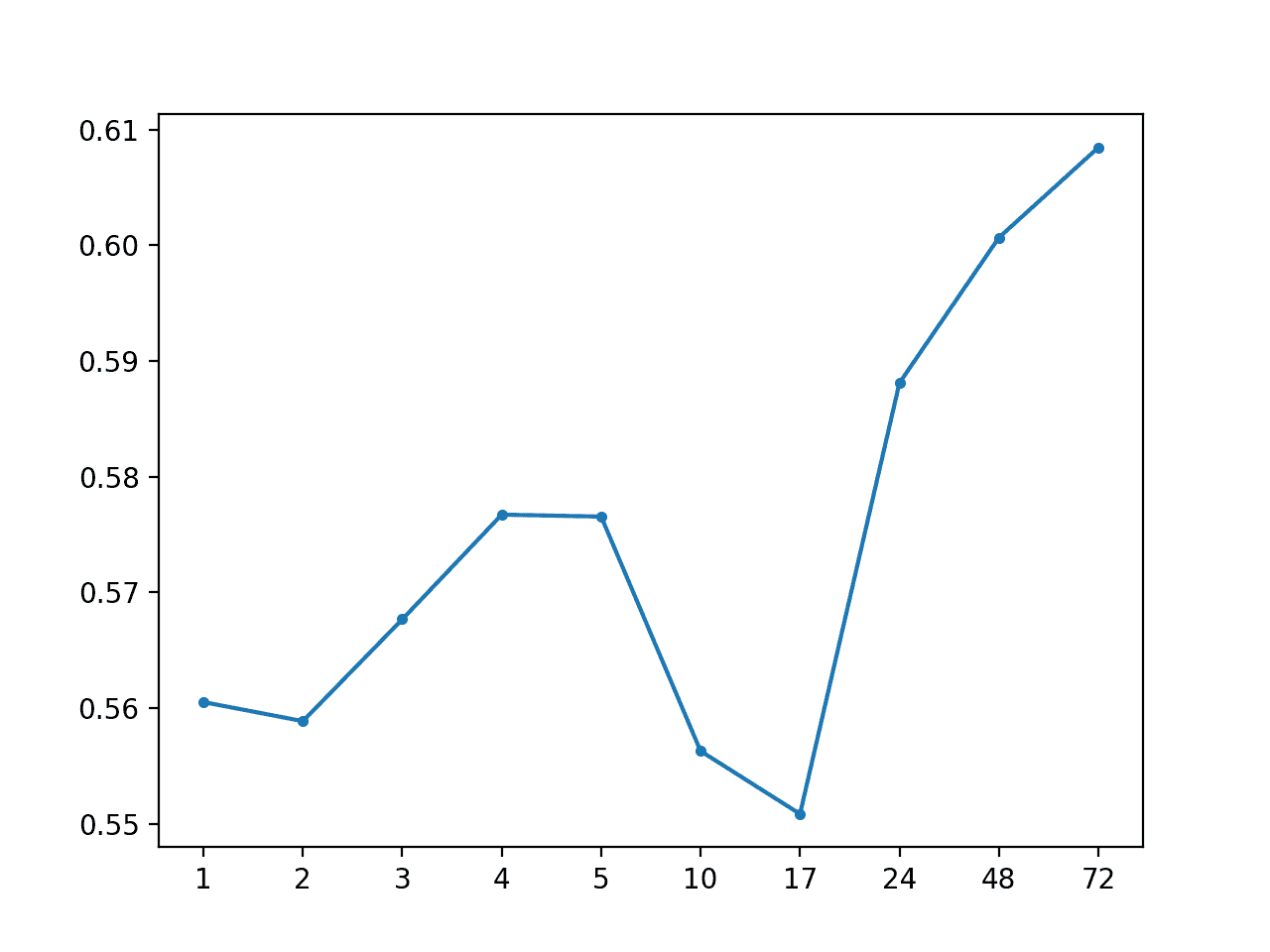

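Running the example summarizes the performance of this naive strategy, showing an MAE of about 0.568, which is worse than the persistence strategy above.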

```py

Local Median: [0.568 MAE] +1 0.535, +2 0.542, +3 0.550, +4 0.568, +5 0.568, +10 0.562, +17 0.567, +24 0.605, +48 0.590, +72 0.593

```

A line plot of MAE per forecast lead time is also created, showing the familiar curve of error broadly increasing with lead time.

MAE by Forecast Lead Time via Local Median

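### Forecast Average by Hour-of-Day for Each Series

Finally, we can refine the local average strategy by using the average value of each series for the specific hour of day at each forecast lead time.

This approach was found to be effective as a global strategy. It may also be effective using only the data from the chunk, although at the risk of using a much smaller data sample.

The _forecast_variable()_ function below implements this strategy, first finding all rows that have the hour of the forecast lead time, then calculating the median of those rows for the given target variable.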

```py
# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    forecast = list()
    # convert target number into column number
    col_ix = 3 + target_ix
    # enumerate lead times
    for i in range(len(lead_times)):
        # get the hour for this forecast lead time
        hour = chunk_test[i, 2]
        # check for no test data
        if isnan(hour):
            forecast.append(nan)
            continue
        # select rows in chunk with this hour
        selected = chunk_train[chunk_train[:,2]==hour]
        # calculate the central tendency for target
        value = nanmedian(selected[:, col_ix])
        forecast.append(value)
    return forecast
```
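The row selection is easier to follow on a toy chunk. In the sketch below, the three-day chunk, its four-column layout, and the helper name `median_for_hour()` are all assumptions made for illustration; the real data has more columns and many missing values. It also shows how few rows feed each forecast: one per day of history.

```python
from numpy import array, nanmedian

# hypothetical toy chunk with columns [chunk_id, position, hour, target]
rows = []
for day in range(3):
    for hour in range(24):
        # made-up target value that depends on the hour and drifts by day
        rows.append([1, day * 24 + hour, hour, hour + day * 0.1])
chunk_train = array(rows)

# hypothetical helper: median of the target over rows matching one hour of day
def median_for_hour(chunk, hour, col_ix=3, hour_ix=2):
    # boolean mask keeps only rows observed at the requested hour
    selected = chunk[chunk[:, hour_ix] == hour]
    return len(selected), nanmedian(selected[:, col_ix])

# three days of history give exactly three rows for hour 7
n_rows, value = median_for_hour(chunk_train, 7)
print(n_rows, value)
```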

The complete example is listed below.

```py
# forecast local median per hour of day
from numpy import loadtxt
from numpy import nan
from numpy import isnan
from numpy import unique
from numpy import array
from numpy import nanmedian
from matplotlib import pyplot

# split the dataset by 'chunkID', return a list of chunks
def to_chunks(values, chunk_ix=0):
    chunks = list()
    # get the unique chunk ids
    chunk_ids = unique(values[:, chunk_ix])
    # group rows by chunk id
    for chunk_id in chunk_ids:
        selection = values[:, chunk_ix] == chunk_id
        chunks.append(values[selection, :])
    return chunks

# return a list of relative forecast lead times
def get_lead_times():
    return [1, 2, 3, 4, 5, 10, 17, 24, 48, 72]

# forecast all lead times for one variable
def forecast_variable(train_chunks, chunk_train, chunk_test, lead_times, target_ix):
    forecast = list()
    # convert target number into column number
    col_ix = 3 + target_ix
    # enumerate lead times
    for i in range(len(lead_times)):
        # get the hour for this forecast lead time
        hour = chunk_test[i, 2]
        # check for no test data
        if isnan(hour):
            forecast.append(nan)
            continue
        # select rows in chunk with this hour
        selected = chunk_train[chunk_train[:,2]==hour]
        # calculate the central tendency for target
        value = nanmedian(selected[:, col_ix])
        forecast.append(value)
    return forecast

# forecast for each chunk, returns [chunk][variable][time]
def forecast_chunks(train_chunks, test_input):
    lead_times = get_lead_times()
    predictions = list()
    # enumerate chunks to forecast
    for i in range(len(train_chunks)):
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(39):
            yhat = forecast_variable(train_chunks, train_chunks[i], test_input[i], lead_times, j)
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# convert the test dataset in chunks to [chunk][variable][time] format
def prepare_test_forecasts(test_chunks):
    predictions = list()
    # enumerate chunks to forecast
    for rows in test_chunks:
        # enumerate targets for chunk
        chunk_predictions = list()
        for j in range(3, rows.shape[1]):
            yhat = rows[:, j]
            chunk_predictions.append(yhat)
        chunk_predictions = array(chunk_predictions)
        predictions.append(chunk_predictions)
    return array(predictions)

# calculate the error between an actual and predicted value
def calculate_error(actual, predicted):
    # give the full actual value if predicted is nan
    if isnan(predicted):
        return abs(actual)
    # calculate abs difference
    return abs(actual - predicted)

# evaluate a forecast in the format [chunk][variable][time]
def evaluate_forecasts(predictions, testset):
    lead_times = get_lead_times()
    total_mae, times_mae = 0.0, [0.0 for _ in range(len(lead_times))]
    total_c, times_c = 0, [0 for _ in range(len(lead_times))]
    # enumerate test chunks (use the argument, not the global test_chunks)
    for i in range(len(testset)):
        # convert to forecasts
        actual = testset[i]
        predicted = predictions[i]
        # enumerate target variables
        for j in range(predicted.shape[0]):
            # enumerate lead times
            for k in range(len(lead_times)):
                # skip if actual is nan
                if isnan(actual[j, k]):
                    continue
                # calculate error
                error = calculate_error(actual[j, k], predicted[j, k])
                # update statistics
                total_mae += error
                times_mae[k] += error
                total_c += 1
                times_c[k] += 1
    # normalize summed absolute errors
    total_mae /= total_c
    times_mae = [times_mae[i]/times_c[i] for i in range(len(times_mae))]
    return total_mae, times_mae

# summarize scores
def summarize_error(name, total_mae, times_mae):
    # print summary
    lead_times = get_lead_times()
    formatted = ['+%d %.3f' % (lead_times[i], times_mae[i]) for i in range(len(lead_times))]
    s_scores = ', '.join(formatted)
    print('%s: [%.3f MAE] %s' % (name, total_mae, s_scores))
    # plot summary
    pyplot.plot([str(x) for x in lead_times], times_mae, marker='.')
    pyplot.show()

# load dataset
train = loadtxt('AirQualityPrediction/naive_train.csv', delimiter=',')
test = loadtxt('AirQualityPrediction/naive_test.csv', delimiter=',')
# group data by chunks
train_chunks = to_chunks(train)
test_chunks = to_chunks(test)
# forecast
test_input = [rows[:, :3] for rows in test_chunks]
forecast = forecast_chunks(train_chunks, test_input)
# evaluate forecast
actual = prepare_test_forecasts(test_chunks)
total_mae, times_mae = evaluate_forecasts(forecast, actual)
# summarize forecast
summarize_error('Local Median by Hour', total_mae, times_mae)
```

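Running the example prints an overall MAE of about 0.574, which is worse than the global variation of the same strategy (0.567).

As suspected, this is likely due to the small sample size, with at most five rows of training data contributing to each forecast.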

```py

Local Median by Hour: [0.574 MAE] +1 0.561, +2 0.559, +3 0.568, +4 0.577, +5 0.577, +10 0.556, +17 0.551, +24 0.588, +48 0.601, +72 0.608

```

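A line plot of MAE per forecast lead time is also created, showing the familiar curve of error broadly increasing with lead time.

MAE by Forecast Lead Time via Local Median By Hour of Day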

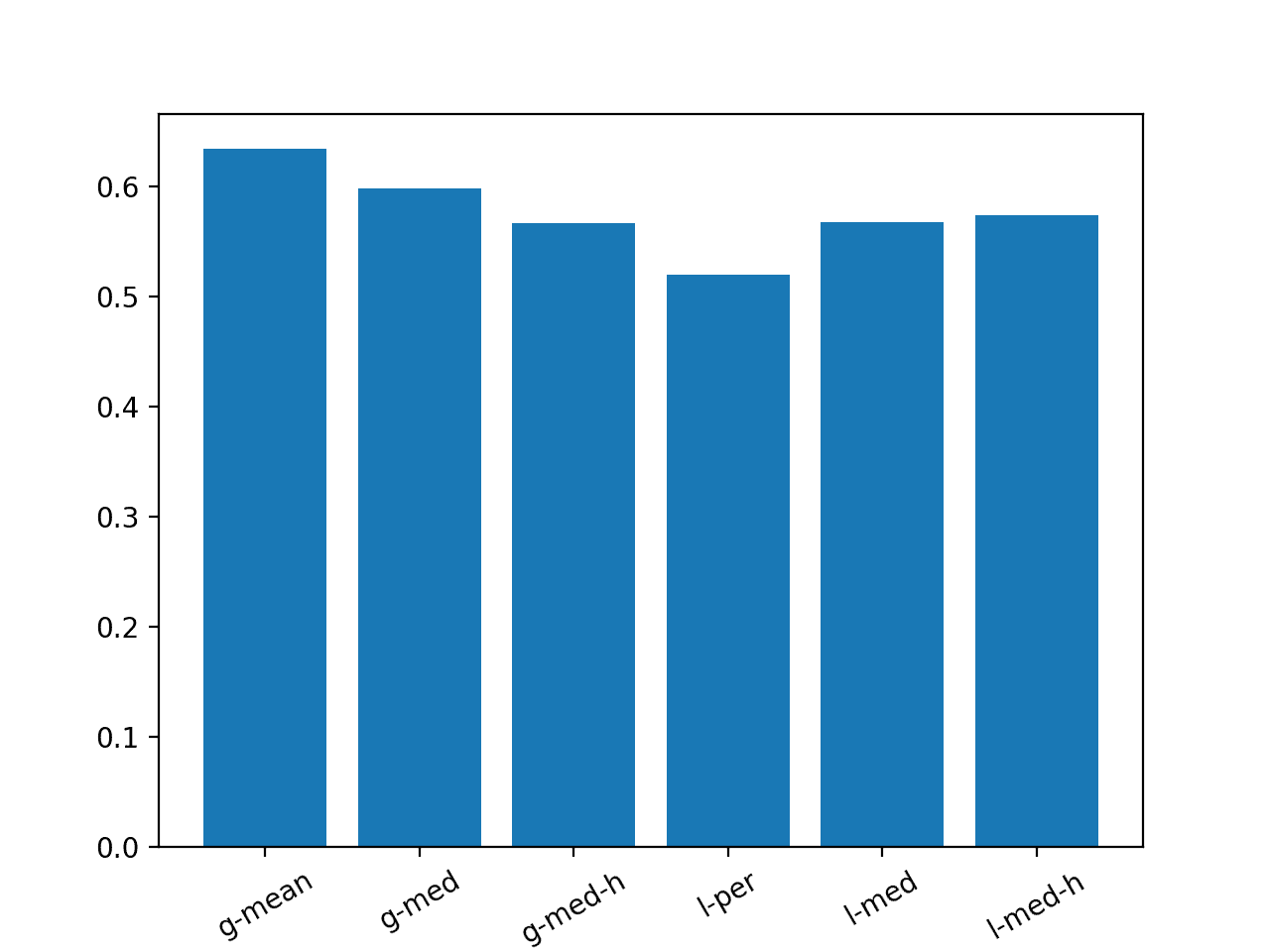

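## Summary of Results

We can summarize the performance of all of the naive forecast methods reviewed in this tutorial.

The example below lists each method using the shorthand '_g_' for global, '_l_' for local, and '_h_' for the hour-of-day variations. It creates a bar chart so that we can compare the naive strategies by their relative performance.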

```py
# summary of results
from matplotlib import pyplot
# results
results = {
    'g-mean':0.634,
    'g-med':0.598,
    'g-med-h':0.567,
    'l-per':0.520,
    'l-med':0.568,
    'l-med-h':0.574}
# plot (convert the dict views to lists for matplotlib)
pyplot.bar(list(results.keys()), list(results.values()))
locs, labels = pyplot.xticks()
pyplot.setp(labels, rotation=30)
pyplot.show()
```

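Running the example creates a bar chart comparing the MAE of each of the six strategies.

We can see that the persistence strategy is better than all of the other methods, and that the second best strategy is the global median for each series by hour of day.

Models evaluated on this train/test split of the dataset must achieve an overall MAE lower than 0.520 in order to be considered skillful.

Bar Chart with Summary of Naive Forecast Methods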
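One way to make the 0.520 threshold concrete is to express a candidate model's MAE relative to the persistence baseline. The sketch below is an assumption-laden illustration: the ratio-based skill score is a common convention rather than something defined in this tutorial, and the candidate model names and MAE values are invented.

```python
# overall MAE of the best naive method in this tutorial (persistence)
BASELINE_MAE = 0.520

# hypothetical helper: fractional improvement over the baseline
def skill_score(model_mae, baseline_mae=BASELINE_MAE):
    # positive means better than the baseline, zero or negative means no skill
    return 1.0 - model_mae / baseline_mae

# invented MAE values for two hypothetical candidate models
candidates = {'hypothetical-model-a': 0.480, 'hypothetical-model-b': 0.545}
for name, mae in candidates.items():
    score = skill_score(mae)
    print('%s: MAE=%.3f skill=%+.3f skillful=%s' % (name, mae, score, mae < BASELINE_MAE))
```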

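## Extensions

This section lists some ideas for extending the tutorial that you may wish to explore.

* **Cross-Site Naive Forecast**. Develop a naive forecast strategy that uses information about each type of variable across sites, e.g. the different target variables that measure the same variable at different sites.

* **Hybrid Approach**. Develop a hybrid forecast strategy that combines elements of two or more of the naive forecast strategies described in this tutorial.

* **Ensemble of Naive Methods**. Develop an ensemble forecast strategy that creates a linear combination of two or more of the forecast strategies described in this tutorial.

If you explore any of these extensions, I'd love to know.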
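As a starting point for the ensemble idea, the sketch below blends two forecasts for one series with a fixed weight and falls back to whichever forecast is available when the other is NaN. The function name `combine_forecasts()`, the 0.5 weight, and the toy forecast values are assumptions for illustration; a real weight would need to be chosen on held-out data.

```python
from numpy import array, nan, isnan, where

# hypothetical helper: weighted blend of two forecasts for the same lead times
def combine_forecasts(a, b, weight_a=0.5):
    a, b = array(a, dtype=float), array(b, dtype=float)
    # linear combination; NaN wherever either input is NaN
    blended = weight_a * a + (1.0 - weight_a) * b
    # where one forecast is missing, fall back to the other
    blended = where(isnan(a), b, blended)
    blended = where(isnan(b), a, blended)
    return blended

# toy forecasts (made-up values) for three lead times
persistence = [0.7, 0.7, nan]
local_median = [0.5, nan, nan]
combined = combine_forecasts(persistence, local_median)
print(combined)
```

Where both forecasts are missing the result stays NaN, which matches how the evaluation harness expects missing forecasts to be represented.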

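## Further Reading

This section provides more resources on the topic if you are looking to go deeper.

### Posts

* [A Standard Multivariate, Multi-Step, and Multi-Site Time Series Forecasting Problem](https://machinelearningmastery.com/standard-multivariate-multi-step-multi-site-time-series-forecasting-problem/)

* [How to Make Baseline Predictions for Time Series Forecasting with Python](https://machinelearningmastery.com/persistence-time-series-forecasting-with-python/)

### Articles

* [EMC Data Science Global Hackathon (Air Quality Prediction)](https://www.kaggle.com/c/dsg-hackathon/data)

* [Chucking everything into a Random Forest: Ben Hamner on Winning The Air Quality Prediction Hackathon](http://blog.kaggle.com/2012/05/01/chucking-everything-into-a-random-forest-ben-hamner-on-winning-the-air-quality-prediction-hackathon/)

* [Winning Code for the EMC Data Science Global Hackathon (Air Quality Prediction)](https://github.com/benhamner/Air-Quality-Prediction-Hackathon-Winning-Model)

* [General approaches to partitioning the models?](https://www.kaggle.com/c/dsg-hackathon/discussion/1821)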

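## Summary

In this tutorial, you discovered how to develop naive forecasting methods for the multistep multivariate air pollution time series forecasting problem.

Specifically, you learned:

* How to develop a test harness for evaluating forecasting strategies for the air pollution dataset.

* How to develop global naive forecast strategies that use data from the entire training dataset.

* How to develop local naive forecast strategies that use data from the specific chunk that is being forecast.

Do you have any questions?

Ask your questions in the comments below and I will do my best to answer.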
