# TensorFlow 中的变分自编码器

变分自编码器是自编码器的现代生成版本。让我们为同一个前面的问题构建一个变分自编码器。我们将通过提供来自原始和嘈杂测试集的图像来测试自编码器。

我们将使用不同的编码风格来构建此自编码器,以便使用 TensorFlow 演示不同的编码风格:

1. 首先定义超参数:

```py

learning_rate = 0.001

n_epochs = 20

batch_size = 100

n_batches = int(mnist.train.num_examples/batch_size)

# number of pixels in the MNIST image as number of inputs

n_inputs = 784

n_outputs = n_inputs

```

1. 接下来,定义参数字典以保存权重和偏差参数:

```py

params={}

```

1. 定义每个编码器和解码器中隐藏层的数量:

```py

n_layers = 2

# neurons in each hidden layer

n_neurons = [512,256]

```

1. 变分编码器中的新增加是我们定义潜变量`z`的维数:

```py

n_neurons_z = 128 # the dimensions of latent variables

```

1. 我们使用激活`tanh`:

```py

activation = tf.nn.tanh

```

1. 定义输入和输出占位符:

```py

x = tf.placeholder(dtype=tf.float32, name="x",

shape=[None, n_inputs])

y = tf.placeholder(dtype=tf.float32, name="y",

shape=[None, n_outputs])

```

1. 定义输入层:

```py

# x is input layer

layer = x

```

1. 定义编码器网络的偏差和权重并添加层。变分自编码器的编码器网络也称为识别网络或推理网络或概率编码器网络:

```py

for i in range(0,n_layers):

name="w_e_{0:04d}".format(i)

params[name] = tf.get_variable(name=name,

shape=[n_inputs if i==0 else n_neurons[i-1],

n_neurons[i]],

initializer=tf.glorot_uniform_initializer()

)

name="b_e_{0:04d}".format(i)

params[name] = tf.Variable(tf.zeros([n_neurons[i]]),

name=name

)

layer = activation(tf.matmul(layer,

params["w_e_{0:04d}".format(i)]

) + params["b_e_{0:04d}".format(i)]

)

```

1. 接下来,添加潜在变量的均值和方差的层:

```py

name="w_e_z_mean"

params[name] = tf.get_variable(name=name,

shape=[n_neurons[n_layers-1], n_neurons_z],

initializer=tf.glorot_uniform_initializer()

)

name="b_e_z_mean"

params[name] = tf.Variable(tf.zeros([n_neurons_z]),

name=name

)

z_mean = tf.matmul(layer, params["w_e_z_mean"]) +

params["b_e_z_mean"]

name="w_e_z_log_var"

params[name] = tf.get_variable(name=name,

shape=[n_neurons[n_layers-1], n_neurons_z],

initializer=tf.glorot_uniform_initializer()

)

name="b_e_z_log_var"

params[name] = tf.Variable(tf.zeros([n_neurons_z]),

name="b_e_z_log_var"

)

z_log_var = tf.matmul(layer, params["w_e_z_log_var"]) +

params["b_e_z_log_var"]

```

1. 接下来,定义表示与`z`方差的变量相同形状的噪声分布的 epsilon 变量:

```py

epsilon = tf.random_normal(tf.shape(z_log_var),

mean=0,

stddev=1.0,

dtype=tf.float32,

name='epsilon'

)

```

1. 根据均值,对数方差和噪声定义后验分布:

```py

z = z_mean + tf.exp(z_log_var * 0.5) * epsilon

```

1. 接下来,定义解码器网络的权重和偏差,并添加解码器层。变分自编码器中的解码器网络也称为概率解码器或生成器网络。

```py

# add generator / probablistic decoder network parameters and layers

layer = z

for i in range(n_layers-1,-1,-1):

name="w_d_{0:04d}".format(i)

params[name] = tf.get_variable(name=name,

shape=[n_neurons_z if i==n_layers-1 else n_neurons[i+1],

n_neurons[i]],

initializer=tf.glorot_uniform_initializer()

)

name="b_d_{0:04d}".format(i)

params[name] = tf.Variable(tf.zeros([n_neurons[i]]),

name=name

)

layer = activation(tf.matmul(layer, params["w_d_{0:04d}".format(i)]) +

params["b_d_{0:04d}".format(i)])

```

1. 最后,定义输出层:

```py

name="w_d_z_mean"

params[name] = tf.get_variable(name=name,

shape=[n_neurons[0],n_outputs],

initializer=tf.glorot_uniform_initializer()

)

name="b_d_z_mean"

params[name] = tf.Variable(tf.zeros([n_outputs]),

name=name

)

name="w_d_z_log_var"

params[name] = tf.Variable(tf.random_normal([n_neurons[0],

n_outputs]),

name=name

)

name="b_d_z_log_var"

params[name] = tf.Variable(tf.zeros([n_outputs]),

name=name

)

layer = tf.nn.sigmoid(tf.matmul(layer, params["w_d_z_mean"]) +

params["b_d_z_mean"])

model = layer

```

1. 在变异自编码器中,我们有重建损失和正则化损失。将损失函数定义为重建损失和正则化损失的总和:

```py

rec_loss = -tf.reduce_sum(y * tf.log(1e-10 + model) + (1-y)

* tf.log(1e-10 + 1 - model), 1)

reg_loss = -0.5*tf.reduce_sum(1 + z_log_var - tf.square(z_mean)

- tf.exp(z_log_var), 1)

loss = tf.reduce_mean(rec_loss+reg_loss)

```

1. 根据`AdapOptimizer`定义优化程序函数:

```py

optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate)

.minimize(loss)

```

1. 现在让我们训练模型并从非噪声和噪声测试图像生成图像:

```py

with tf.Session() as tfs:

tf.global_variables_initializer().run()

for epoch in range(n_epochs):

epoch_loss = 0.0

for batch in range(n_batches):

X_batch, _ = mnist.train.next_batch(batch_size)

feed_dict={x: X_batch,y: X_batch}

_,batch_loss = tfs.run([optimizer,loss],

feed_dict=feed_dict)

epoch_loss += batch_loss

if (epoch%10==9) or (epoch==0):

average_loss = epoch_loss / n_batches

print("epoch: {0:04d} loss = {1:0.6f}"

.format(epoch,average_loss))

# predict images using autoencoder model trained

Y_test_pred1 = tfs.run(model, feed_dict={x: test_images})

Y_test_pred2 = tfs.run(model, feed_dict={x: test_images_noisy})

```

我们得到以下输出:

```py

epoch: 0000 loss = 180.444682

epoch: 0009 loss = 106.817749

epoch: 0019 loss = 102.580904

```

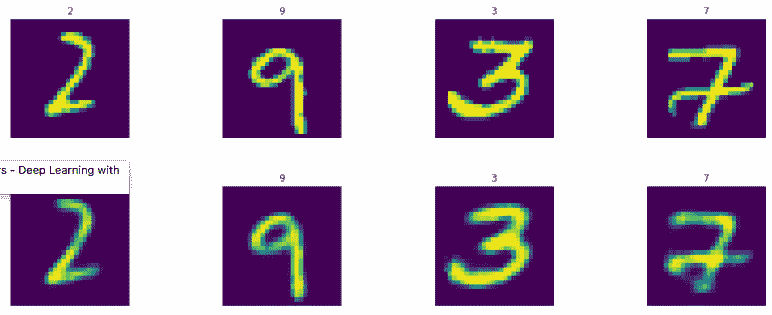

现在让我们显示图像:

```py

display_images(test_images.reshape(-1,pixel_size,pixel_size),test_labels)

display_images(Y_test_pred1.reshape(-1,pixel_size,pixel_size),test_labels)

```

结果如下:

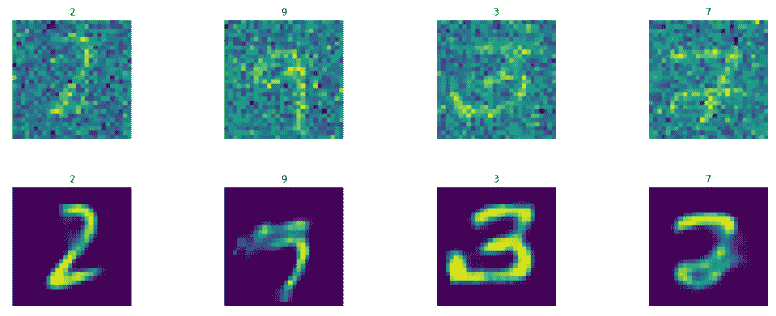

```py

display_images(test_images_noisy.reshape(-1,pixel_size,pixel_size),

test_labels)

display_images(Y_test_pred2.reshape(-1,pixel_size,pixel_size),test_labels)

```

结果如下:

同样,可以通过超参数调整和增加学习量来改善结果。

- TensorFlow 101

- 什么是 TensorFlow?

- TensorFlow 核心

- 代码预热 - Hello TensorFlow

- 张量

- 常量

- 操作

- 占位符

- 从 Python 对象创建张量

- 变量

- 从库函数生成的张量

- 使用相同的值填充张量元素

- 用序列填充张量元素

- 使用随机分布填充张量元素

- 使用tf.get_variable()获取变量

- 数据流图或计算图

- 执行顺序和延迟加载

- 跨计算设备执行图 - CPU 和 GPU

- 将图节点放置在特定的计算设备上

- 简单放置

- 动态展示位置

- 软放置

- GPU 内存处理

- 多个图

- TensorBoard

- TensorBoard 最小的例子

- TensorBoard 详情

- 总结

- TensorFlow 的高级库

- TF Estimator - 以前的 TF 学习

- TF Slim

- TFLearn

- 创建 TFLearn 层

- TFLearn 核心层

- TFLearn 卷积层

- TFLearn 循环层

- TFLearn 正则化层

- TFLearn 嵌入层

- TFLearn 合并层

- TFLearn 估计层

- 创建 TFLearn 模型

- TFLearn 模型的类型

- 训练 TFLearn 模型

- 使用 TFLearn 模型

- PrettyTensor

- Sonnet

- 总结

- Keras 101

- 安装 Keras

- Keras 中的神经网络模型

- 在 Keras 建立模型的工作流程

- 创建 Keras 模型

- 用于创建 Keras 模型的顺序 API

- 用于创建 Keras 模型的函数式 API

- Keras 层

- Keras 核心层

- Keras 卷积层

- Keras 池化层

- Keras 本地连接层

- Keras 循环层

- Keras 嵌入层

- Keras 合并层

- Keras 高级激活层

- Keras 正则化层

- Keras 噪音层

- 将层添加到 Keras 模型

- 用于将层添加到 Keras 模型的顺序 API

- 用于向 Keras 模型添加层的函数式 API

- 编译 Keras 模型

- 训练 Keras 模型

- 使用 Keras 模型进行预测

- Keras 的附加模块

- MNIST 数据集的 Keras 序列模型示例

- 总结

- 使用 TensorFlow 进行经典机器学习

- 简单的线性回归

- 数据准备

- 构建一个简单的回归模型

- 定义输入,参数和其他变量

- 定义模型

- 定义损失函数

- 定义优化器函数

- 训练模型

- 使用训练的模型进行预测

- 多元回归

- 正则化回归

- 套索正则化

- 岭正则化

- ElasticNet 正则化

- 使用逻辑回归进行分类

- 二分类的逻辑回归

- 多类分类的逻辑回归

- 二分类

- 多类分类

- 总结

- 使用 TensorFlow 和 Keras 的神经网络和 MLP

- 感知机

- 多层感知机

- 用于图像分类的 MLP

- 用于 MNIST 分类的基于 TensorFlow 的 MLP

- 用于 MNIST 分类的基于 Keras 的 MLP

- 用于 MNIST 分类的基于 TFLearn 的 MLP

- 使用 TensorFlow,Keras 和 TFLearn 的 MLP 总结

- 用于时间序列回归的 MLP

- 总结

- 使用 TensorFlow 和 Keras 的 RNN

- 简单循环神经网络

- RNN 变种

- LSTM 网络

- GRU 网络

- TensorFlow RNN

- TensorFlow RNN 单元类

- TensorFlow RNN 模型构建类

- TensorFlow RNN 单元包装器类

- 适用于 RNN 的 Keras

- RNN 的应用领域

- 用于 MNIST 数据的 Keras 中的 RNN

- 总结

- 使用 TensorFlow 和 Keras 的时间序列数据的 RNN

- 航空公司乘客数据集

- 加载 airpass 数据集

- 可视化 airpass 数据集

- 使用 TensorFlow RNN 模型预处理数据集

- TensorFlow 中的简单 RNN

- TensorFlow 中的 LSTM

- TensorFlow 中的 GRU

- 使用 Keras RNN 模型预处理数据集

- 使用 Keras 的简单 RNN

- 使用 Keras 的 LSTM

- 使用 Keras 的 GRU

- 总结

- 使用 TensorFlow 和 Keras 的文本数据的 RNN

- 词向量表示

- 为 word2vec 模型准备数据

- 加载和准备 PTB 数据集

- 加载和准备 text8 数据集

- 准备小验证集

- 使用 TensorFlow 的 skip-gram 模型

- 使用 t-SNE 可视化单词嵌入

- keras 的 skip-gram 模型

- 使用 TensorFlow 和 Keras 中的 RNN 模型生成文本

- TensorFlow 中的 LSTM 文本生成

- Keras 中的 LSTM 文本生成

- 总结

- 使用 TensorFlow 和 Keras 的 CNN

- 理解卷积

- 了解池化

- CNN 架构模式 - LeNet

- 用于 MNIST 数据的 LeNet

- 使用 TensorFlow 的用于 MNIST 的 LeNet CNN

- 使用 Keras 的用于 MNIST 的 LeNet CNN

- 用于 CIFAR10 数据的 LeNet

- 使用 TensorFlow 的用于 CIFAR10 的 ConvNets

- 使用 Keras 的用于 CIFAR10 的 ConvNets

- 总结

- 使用 TensorFlow 和 Keras 的自编码器

- 自编码器类型

- TensorFlow 中的栈式自编码器

- Keras 中的栈式自编码器

- TensorFlow 中的去噪自编码器

- Keras 中的去噪自编码器

- TensorFlow 中的变分自编码器

- Keras 中的变分自编码器

- 总结

- TF 服务:生产中的 TensorFlow 模型

- 在 TensorFlow 中保存和恢复模型

- 使用保护程序类保存和恢复所有图变量

- 使用保护程序类保存和恢复所选变量

- 保存和恢复 Keras 模型

- TensorFlow 服务

- 安装 TF 服务

- 保存 TF 服务的模型

- 提供 TF 服务模型

- 在 Docker 容器中提供 TF 服务

- 安装 Docker

- 为 TF 服务构建 Docker 镜像

- 在 Docker 容器中提供模型

- Kubernetes 中的 TensorFlow 服务

- 安装 Kubernetes

- 将 Docker 镜像上传到 dockerhub

- 在 Kubernetes 部署

- 总结

- 迁移学习和预训练模型

- ImageNet 数据集

- 再训练或微调模型

- COCO 动物数据集和预处理图像

- TensorFlow 中的 VGG16

- 使用 TensorFlow 中预训练的 VGG16 进行图像分类

- TensorFlow 中的图像预处理,用于预训练的 VGG16

- 使用 TensorFlow 中的再训练的 VGG16 进行图像分类

- Keras 的 VGG16

- 使用 Keras 中预训练的 VGG16 进行图像分类

- 使用 Keras 中再训练的 VGG16 进行图像分类

- TensorFlow 中的 Inception v3

- 使用 TensorFlow 中的 Inception v3 进行图像分类

- 使用 TensorFlow 中的再训练的 Inception v3 进行图像分类

- 总结

- 深度强化学习

- OpenAI Gym 101

- 将简单的策略应用于 cartpole 游戏

- 强化学习 101

- Q 函数(在模型不可用时学习优化)

- RL 算法的探索与开发

- V 函数(模型可用时学习优化)

- 强化学习技巧

- 强化学习的朴素神经网络策略

- 实现 Q-Learning

- Q-Learning 的初始化和离散化

- 使用 Q-Table 进行 Q-Learning

- Q-Network 或深 Q 网络(DQN)的 Q-Learning

- 总结

- 生成性对抗网络

- 生成性对抗网络 101

- 建立和训练 GAN 的最佳实践

- 使用 TensorFlow 的简单的 GAN

- 使用 Keras 的简单的 GAN

- 使用 TensorFlow 和 Keras 的深度卷积 GAN

- 总结

- 使用 TensorFlow 集群的分布式模型

- 分布式执行策略

- TensorFlow 集群

- 定义集群规范

- 创建服务器实例

- 定义服务器和设备之间的参数和操作

- 定义并训练图以进行异步更新

- 定义并训练图以进行同步更新

- 总结

- 移动和嵌入式平台上的 TensorFlow 模型

- 移动平台上的 TensorFlow

- Android 应用中的 TF Mobile

- Android 上的 TF Mobile 演示

- iOS 应用中的 TF Mobile

- iOS 上的 TF Mobile 演示

- TensorFlow Lite

- Android 上的 TF Lite 演示

- iOS 上的 TF Lite 演示

- 总结

- R 中的 TensorFlow 和 Keras

- 在 R 中安装 TensorFlow 和 Keras 软件包

- R 中的 TF 核心 API

- R 中的 TF 估计器 API

- R 中的 Keras API

- R 中的 TensorBoard

- R 中的 tfruns 包

- 总结

- 调试 TensorFlow 模型

- 使用tf.Session.run()获取张量值

- 使用tf.Print()打印张量值

- 用tf.Assert()断言条件

- 使用 TensorFlow 调试器(tfdbg)进行调试

- 总结

- 张量处理单元